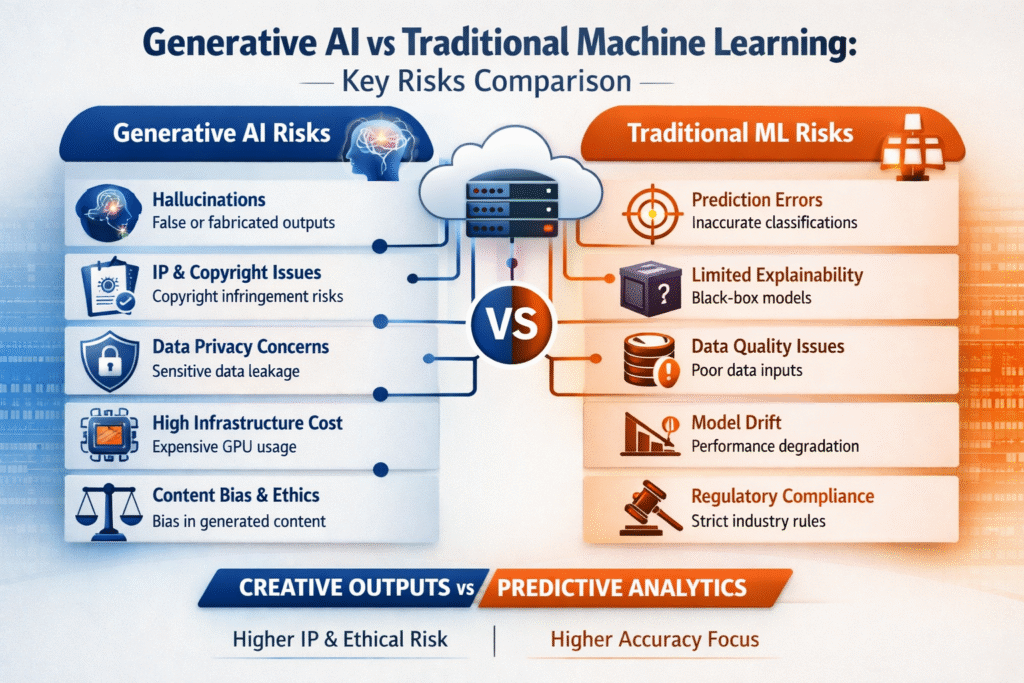

Generative AI and traditional machine learning (ML) share foundational principles, but their risk profiles differ significantly in scope, impact, and governance complexity. Traditional ML is largely predictive or classification-based, while generative AI produces new content, which introduces broader operational and ethical exposure.

Below is a structured, enterprise-level comparison.

1. Core Functional Difference

| Aspect | Generative AI | Traditional Machine Learning |
|---|---|---|
| Primary Function | Creates new content (text, image, code) | Predicts, classifies, or detects patterns |
| Output Nature | Open-ended and creative | Structured and bounded |
| Risk Surface | Broad and unpredictable | Narrow and measurable |

2. Hallucination vs Prediction Error

Generative AI

Models may fabricate information and present it confidently (hallucination). This behavior is well documented in large language models (LLMs), including those developed by organizations such as OpenAI.

Risk Impact:

- False legal drafts

- Incorrect medical summaries

- Fabricated financial analysis

Traditional ML

Errors are typically misclassifications or inaccurate predictions.

Risk Impact:

- Incorrect fraud detection

- Misclassified loan applications

🔎 Key Difference:

Generative AI errors are often harder to detect because outputs appear coherent and plausible.

3. Data Privacy Risks

Generative AI

- Risk of memorizing sensitive training data

- Prompt injection vulnerabilities

- Leakage of proprietary information

Cloud deployments on platforms such as Amazon Web Services or Microsoft Azure require strict governance over who can access prompts, outputs, and training data.

Traditional ML

- Data leakage risk exists but is typically limited to structured datasets

- Less prone to free-form data exposure

🔎 Key Difference:

Generative AI interacts dynamically with user inputs, increasing attack vectors.
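
One way to reduce the leakage risk described above is to redact obviously sensitive values before a prompt leaves the organization. The sketch below is a minimal illustration, not a complete control: the two patterns (email addresses and US-style Social Security numbers) and the `redact` helper are assumptions chosen for the example, and pattern matching alone will miss many kinds of sensitive data.

```python
import re

# Illustrative patterns only; a real deployment would need broader,
# audited coverage (names, account numbers, internal identifiers, ...).
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(prompt: str) -> str:
    """Replace each match of a known pattern with a bracketed label."""
    for label, pattern in PATTERNS.items():
        prompt = pattern.sub(f"[{label}]", prompt)
    return prompt


print(redact("Contact jane@example.com about SSN 123-45-6789."))
```

A filter like this would sit alongside, not replace, access controls and contractual safeguards with the model provider.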

4. Bias and Ethical Exposure

Generative AI

Bias may appear in:

- Generated narratives

- Hiring recommendations

- Customer interactions

Because outputs are expressive, bias is more visible and reputationally damaging.

Traditional ML

Bias exists in:

- Risk scoring

- Credit decisions

- Predictive policing

However, outputs are numerical and often easier to audit statistically.
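
To illustrate what a statistical audit of numerical outputs can look like, the sketch below computes approval rates per group and the ratio between the lowest and highest rate (often called the disparate impact ratio). The function names and the sample data are hypothetical. A ratio below 0.8 is a commonly cited warning threshold (the "four-fifths rule"), but the right metric and threshold depend on the use case and jurisdiction.

```python
from collections import defaultdict


def approval_rates(decisions):
    """decisions: iterable of (group, approved) pairs -> rate per group."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(decisions):
    """Lowest group approval rate divided by the highest."""
    rates = approval_rates(decisions)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group was approved, so all rates are equal
    return min(rates.values()) / highest


# Made-up loan decisions for two groups.
sample = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 4 + [("B", False)] * 6
)
print(approval_rates(sample))
print(disparate_impact_ratio(sample))
```

A comparable check for generative output is harder to define, because there is no single numeric decision to compare across groups.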

🔎 Key Difference:

Generative AI bias impacts public perception more directly.

5. Intellectual Property Risks

Generative AI

- May generate content resembling copyrighted works

- Legal ambiguity over AI-generated ownership

Traditional ML

- Rarely creates new expressive content

- Lower copyright exposure

🔎 Key Difference:

IP risk is significantly higher in generative AI systems.

6. Explainability and Transparency

Generative AI

- Complex transformer architectures

- Difficult to trace reasoning

- Outputs may not be reproducible

Traditional ML

- Models such as decision trees and linear or logistic regression are easier to interpret

- Established explainable AI (XAI) tooling, such as SHAP and LIME, is available for less transparent models

🔎 Key Difference:

Explainability challenges are more severe in generative AI.
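
The reproducibility point above comes largely from sampling: a generative model typically picks each output token at random from a probability distribution. The toy sketch below, which assumes made-up scores for three candidate tokens and is not how any specific model or API is implemented, shows that greedy selection (temperature 0) is deterministic, while sampled output repeats only when the random seed is fixed.

```python
import math
import random


def sample_token(logits, temperature, rng):
    """Pick one token from a dict mapping token -> score."""
    if temperature == 0:
        # Greedy decoding: always return the highest-scoring token.
        return max(logits, key=logits.get)
    # Softmax over temperature-scaled scores, shifted by the max for stability.
    top = max(logits.values())
    weights = [math.exp((score - top) / temperature) for score in logits.values()]
    return rng.choices(list(logits), weights=weights, k=1)[0]


toy_logits = {"approved": 2.0, "denied": 1.5, "pending": 0.5}  # made-up scores


def generate(seed, n=5, temperature=1.0):
    """Draw n tokens using a generator seeded for this run."""
    rng = random.Random(seed)
    return [sample_token(toy_logits, temperature, rng) for _ in range(n)]


print(generate(seed=7))  # same seed, same sequence
print(generate(seed=7))
print(generate(seed=8))  # a different seed may give a different sequence
```

Hosted model services may not expose a seed at all, or may not guarantee identical output even when one is set, so audit processes should log the actual outputs rather than rely on regenerating them.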

7. Infrastructure and Cost Risk

Generative AI

- Training or self-hosting large models typically requires GPU clusters

- High inference cost

- Token-based usage pricing

Traditional ML

- Lower computational intensity

- Often CPU-based

- Cheaper deployment lifecycle

🔎 Key Difference:

Generative AI operational costs are significantly higher.
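
Token-based pricing can be budgeted with simple arithmetic. The sketch below is an assumption-laden estimate: the function name, the traffic figures, and the per-1,000-token prices are placeholders, not any vendor's actual rates.

```python
def estimate_monthly_cost(requests_per_day, input_tokens, output_tokens,
                          price_in_per_1k, price_out_per_1k, days=30):
    """Estimate spend when input and output tokens are priced per 1,000."""
    per_request = (
        (input_tokens / 1000) * price_in_per_1k
        + (output_tokens / 1000) * price_out_per_1k
    )
    return requests_per_day * days * per_request


# Placeholder figures: 10,000 requests a day, 500 input and 200 output tokens each.
cost = estimate_monthly_cost(
    requests_per_day=10_000,
    input_tokens=500,
    output_tokens=200,
    price_in_per_1k=0.001,   # hypothetical price
    price_out_per_1k=0.002,  # hypothetical price
)
print(round(cost, 2))
```

Because cost scales with both traffic and prompt length, longer prompts or retrieved context can raise spend even when request volume is flat.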

8. Security Threat Landscape

Generative AI

Unique risks include:

- Prompt injection attacks

- Jailbreaking attempts

- Adversarial manipulation

- Content abuse

Traditional ML

- Data poisoning

- Model inversion

- Adversarial examples

🔎 Key Difference:

Generative AI expands the threat surface into conversational and creative misuse.

9. Regulatory and Compliance Risk

Generative AI

- Under increasing regulatory scrutiny

- Concerns around misinformation and societal impact

- Emerging AI governance laws, such as the EU AI Act

Traditional ML

- Well-established regulatory oversight in finance and healthcare

- More mature compliance frameworks

🔎 Key Difference:

Generative AI regulation is still evolving and more uncertain.

10. Reputational Risk

Generative AI

Public-facing applications (chatbots, AI assistants) can:

- Generate offensive content

- Spread misinformation

- Damage brand trust

Traditional ML

Reputational damage is typically indirect (e.g., biased loan denial).

🔎 Key Difference:

Generative AI failures are more visible and viral.

Enterprise Risk Comparison Matrix

| Risk Category | Generative AI | Traditional ML |
|---|---|---|
| Hallucination | Very High | Low |
| Bias Impact | High | Medium |
| IP Risk | High | Low |
| Compliance Complexity | High | Medium |
| Infrastructure Cost | Very High | Medium |
| Explainability (higher is better) | Low | Medium–High |
| Security Threat Surface | Broad | Moderate |
| Reputational Risk | Very High | Medium |

Strategic Implications for Enterprises

Generative AI introduces a broader and more complex risk landscape than traditional machine learning because it:

- Produces open-ended content

- Interacts dynamically with users

- Operates at large scale in public-facing environments

Traditional ML risks are often quantitative and bounded, while generative AI risks are qualitative and systemic.

Organizations adopting generative AI must implement:

- Strong governance frameworks

- AI risk assessment protocols

- Responsible AI policies

- Continuous model monitoring

- Legal and compliance oversight

Conclusion

While traditional machine learning risks focus on prediction accuracy and fairness, generative AI expands the risk surface to include misinformation, intellectual property disputes, ethical exposure, and high operational cost.

Enterprises must treat generative AI not as an incremental upgrade to ML but as a new category of intelligent systems requiring enhanced governance, security architecture, and regulatory alignment.

FAQ: Generative AI Risks vs Traditional Machine Learning Risks

1. What is the main difference between generative AI and traditional machine learning risks?

The primary difference lies in output behavior. Generative AI creates new content such as text, images, or code, which increases risks like hallucinations, misinformation, and intellectual property issues. Traditional machine learning primarily predicts or classifies data, so its risks are usually limited to accuracy errors, bias in predictions, and model performance issues.

2. Why is hallucination considered a major risk in generative AI?

Generative AI models can produce confident but incorrect or fabricated information. This is especially dangerous in industries like healthcare, finance, and legal services where factual accuracy is critical. Traditional ML models typically produce structured outputs, making errors easier to detect and measure.

3. Is generative AI more expensive to operate than traditional ML?

Generally, yes. Generative AI systems typically rely on high-performance GPUs, large-scale cloud infrastructure, and continuous inference processing, often billed per token. Traditional ML models are generally less computationally intensive and more cost-efficient to deploy and maintain.

4. Which has higher compliance and regulatory risk?

Generative AI currently carries higher regulatory uncertainty because it can generate public-facing content that may include bias, misinformation, or copyrighted material. Traditional ML has more mature compliance frameworks, especially in finance and healthcare.

5. Are data privacy risks higher in generative AI?

In many cases, yes. Generative AI interacts dynamically with user prompts and may inadvertently expose sensitive training data if not properly governed. Traditional ML systems usually operate on controlled datasets with narrower exposure.

6. How does bias differ between generative AI and traditional ML?

Traditional ML bias typically affects decision-making outcomes such as credit scoring or risk assessment. Generative AI bias may appear in conversational outputs, content generation, or recommendations, making it more visible and reputationally impactful.

7. What are the intellectual property risks in generative AI?

Generative AI may create content that resembles copyrighted material if its training data included such works. This raises questions about ownership and legal responsibility. Traditional ML rarely generates expressive content, so IP risks are significantly lower.

8. Which technology poses greater reputational risk?

Generative AI poses greater reputational risk because its outputs are often public-facing and creative. A single inappropriate or inaccurate response can spread quickly and damage brand trust. Traditional ML failures are usually internal and less visible.

9. How can enterprises reduce risks in generative AI adoption?

Organizations should implement:

- Strong AI governance frameworks

- Human-in-the-loop validation

- Continuous monitoring and auditing

- Responsible AI policies

- Secure cloud deployment strategies

These measures reduce operational, ethical, and regulatory exposure.
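
Human-in-the-loop validation can be as simple as a routing rule that holds certain outputs for review before release. The sketch below is a hypothetical policy: the `Draft` type, the list of high-risk domains, and the rule itself are assumptions for illustration, and each organization would define its own criteria.

```python
from dataclasses import dataclass

# Hypothetical policy: domains where a fabricated answer is costly.
HIGH_RISK_DOMAINS = {"legal", "medical", "financial"}


@dataclass
class Draft:
    domain: str          # business area the output belongs to
    text: str            # generated content
    public_facing: bool  # whether customers or the public will see it


def route(draft: Draft) -> str:
    """Return 'human_review' for risky drafts, otherwise 'auto_release'."""
    if draft.domain in HIGH_RISK_DOMAINS or draft.public_facing:
        return "human_review"
    return "auto_release"


print(route(Draft("legal", "Draft contract clause", public_facing=False)))
print(route(Draft("internal_notes", "Meeting summary", public_facing=False)))
```

Logging each routing decision alongside the reviewer's verdict also supports the monitoring and auditing measures listed above.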

10. Is generative AI replacing traditional machine learning?

No. Generative AI complements traditional ML rather than replacing it. Predictive analytics, classification systems, and statistical models remain essential for structured decision-making, while generative AI enhances automation and creative workflows.