The volume of data produced each day has long since outpaced what classical computing can meaningfully process in real time. Quantum computing offers something fundamentally different — not merely faster hardware, but an entirely new model of computation built on the physics of quantum mechanics. For big data teams, this changes the equation completely.

Organizations across finance, logistics, healthcare, and retail are wrestling with datasets so large and complex that even the most powerful classical supercomputers hit hard walls: processing bottlenecks that no amount of additional RAM or CPU cores can fully resolve. Quantum computing doesn’t throw more hardware at the same problem. It reimagines how the problem is approached at the computational level, representing enormous solution spaces in superposition rather than enumerating them one by one. In 2026, that capability is moving from laboratory benchmarks to genuine enterprise pilots with measurable outcomes.

This guide breaks down everything data professionals and business leaders need to know: how quantum systems process big data differently, which algorithms are driving real results, what industries are seeing early gains, and exactly how your organization can begin building a quantum-ready data strategy today.

01 — The Core Problem

Why Classical Computing Has Hit Its Big Data Ceiling

To understand what quantum computing offers big data processing, it helps to be precise about where classical systems actually fail. It isn’t raw speed alone — modern GPU clusters and distributed frameworks like Apache Spark handle enormous data volumes impressively. The limitation emerges at a specific intersection: when data problems are both high-dimensional and combinatorially explosive.

Consider a financial institution modeling systemic risk across thousands of interconnected assets, with correlations shifting in real time. Or a logistics network optimizing delivery routes across 50,000 daily shipments with dozens of real-time constraints. Or a genomics researcher clustering millions of genetic markers across patient cohorts. In each case, the solution space doesn’t just grow as variables increase — it grows exponentially. Classical computers must work through possible states one at a time, which is why Monte Carlo simulations take hours, route optimization converges on approximations rather than optimal solutions, and real-time fraud detection relies on simplified rule sets rather than full pattern analysis.
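To make the growth rate concrete, here is a minimal sketch that counts delivery orderings for a single vehicle. The evaluation rate of one billion routes per second is an assumption chosen for illustration, not a measured figure.

```python
import math

def route_count(stops: int) -> int:
    """Number of distinct visiting orders for `stops` stops from a fixed depot."""
    return math.factorial(stops)

def years_to_enumerate(stops: int, routes_per_second: float = 1e9) -> float:
    """Years needed to check every ordering at an assumed evaluation rate."""
    seconds = route_count(stops) / routes_per_second
    return seconds / (365 * 24 * 3600)

for stops in (5, 10, 15, 20):
    print(f"{stops:>2} stops: {route_count(stops):,} routes, "
          f"{years_to_enumerate(stops):.3g} years to enumerate")
```

Twenty stops already push exhaustive search into decades at this rate, which is why production routing systems rely on heuristics.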

The fundamental constraint: Classical throughput scales at best linearly with processor count. The hardest big data problems scale exponentially in complexity. Quantum computing addresses this mismatch on specific problem classes by encoding exponentially large solution spaces in a comparatively small number of qubits and using interference to surface good answers, not by adding more classical processors.

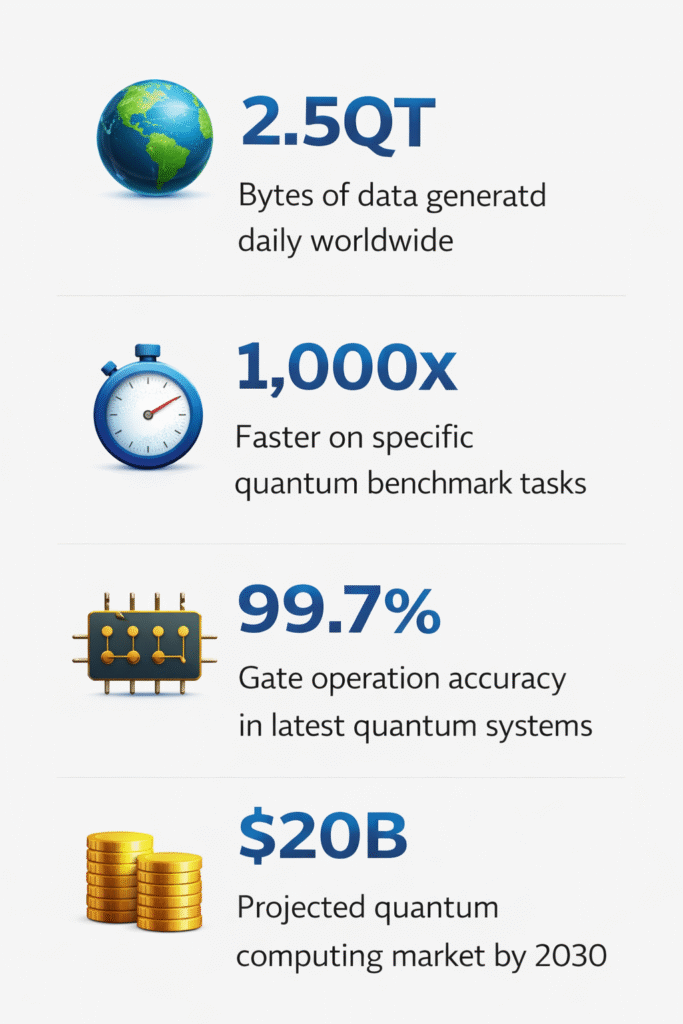

This computational ceiling isn’t theoretical. The latest quantum systems are reported to achieve 99.7% gate fidelity, up from 97% eighteen months earlier, and quantum processors have solved certain narrowly defined benchmark tasks about 1,000 times faster than classical supercomputers. The gap between lab benchmarks and production enterprise workloads is narrowing measurably.

02 — Quantum Mechanics

How Quantum Systems Process Data Fundamentally Differently

Quantum computers don’t just run the same algorithms faster. They compute in an architecturally distinct way that makes certain classes of problems — precisely the ones that dominate hard big data challenges — tractable at a scale classical systems cannot match.

Superposition: Processing Many States at Once

A classical bit is always either 0 or 1. A qubit, through the principle of superposition, exists in a weighted combination of both states until it’s measured. A system of just 50 qubits is described by 2⁵⁰ amplitudes, roughly one quadrillion, at the same time. Measurement returns only one outcome, so a quantum algorithm must arrange for the useful answer to be the likely one. For a big data processing system, this means operating on a very large solution space held in a single state rather than iterating over it one entry at a time.
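A small plain-Python sketch can show both halves of the superposition picture: the state holds one amplitude per basis state, yet a measurement yields a single outcome. The function names are illustrative, and the 16-bytes-per-amplitude figure assumes double-precision complex numbers in a classical simulator.

```python
import math
import random

def uniform_superposition(n_qubits: int) -> list[complex]:
    """Equal-weight superposition over all 2**n_qubits basis states."""
    dim = 2 ** n_qubits
    return [complex(1 / math.sqrt(dim), 0.0)] * dim

def measure(state: list[complex], rng: random.Random) -> int:
    """Sample ONE basis state with probability |amplitude|**2."""
    probs = [abs(a) ** 2 for a in state]
    return rng.choices(range(len(state)), weights=probs, k=1)[0]

def statevector_bytes(n_qubits: int) -> int:
    """Memory a classical simulator needs, assuming 16 bytes per amplitude."""
    return (2 ** n_qubits) * 16

state = uniform_superposition(3)
print("amplitudes tracked:", len(state))
print("single measured outcome:", measure(state, random.Random(0)))
print("50-qubit statevector:", statevector_bytes(50) / 1e15, "petabytes")
```

The last line is the reason 50 qubits is often quoted as a rough boundary for brute-force classical simulation.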

Entanglement: Coordinated Multi-Variable Analysis

When qubits are entangled, their states become correlated: measuring one fixes the statistics of the other, regardless of physical separation, although no usable signal passes between them. For data analytics, the hope is that entangled states let quantum models represent correlations among many variables at once, reducing reliance on the dimensionality reduction that classical ML techniques like PCA impose and preserving more signal during the analysis phase.
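The sketch below samples a two-qubit Bell state in plain Python to illustrate what "correlated" means here. It is a toy statevector model, not hardware code, and the helper names are made up for this example.

```python
import math
import random

LABELS = ["00", "01", "10", "11"]
# Bell state (|00> + |11>) / sqrt(2), amplitudes listed in LABELS order.
BELL = [1 / math.sqrt(2), 0.0, 0.0, 1 / math.sqrt(2)]

def sample_pairs(state: list[float], shots: int, seed: int = 0) -> list[str]:
    """Measure both qubits `shots` times and return the observed bit pairs."""
    rng = random.Random(seed)
    probs = [abs(a) ** 2 for a in state]
    return rng.choices(LABELS, weights=probs, k=shots)

def second_qubit_marginal(state: list[float]) -> float:
    """P(second qubit reads 1) when the first qubit is ignored."""
    return abs(state[1]) ** 2 + abs(state[3]) ** 2

samples = sample_pairs(BELL, shots=1000)
agreement = sum(1 for pair in samples if pair[0] == pair[1]) / len(samples)
print("fraction of shots where both qubits agree:", agreement)
print("P(second qubit = 1) on its own:", second_qubit_marginal(BELL))
```

The two bits always agree, yet each one viewed alone is a fair coin. That combination is why entanglement produces correlation without carrying a message.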

Interference: Amplifying Correct Paths

Quantum interference allows algorithms to amplify computational paths that lead toward correct answers while suppressing those that don’t. Think of ripples on water: waves from different sources either reinforce each other or cancel out. Quantum algorithms exploit this property to guide the system toward accurate solutions far more efficiently than brute-force classical search.
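The smallest interference example is a Hadamard gate applied twice to a single qubit. In this plain-Python sketch, the first application spreads the amplitude evenly, and the second makes the two paths into |1⟩ cancel while the paths into |0⟩ reinforce.

```python
import math

R = 1 / math.sqrt(2)
HADAMARD = [[R, R],
            [R, -R]]

def apply(gate: list[list[float]], state: list[float]) -> list[float]:
    """Multiply a 2x2 gate matrix into a single-qubit state vector."""
    return [sum(gate[row][col] * state[col] for col in range(2)) for row in range(2)]

def probabilities(state: list[float]) -> list[float]:
    return [abs(a) ** 2 for a in state]

zero = [1.0, 0.0]
once = apply(HADAMARD, zero)    # equal superposition of 0 and 1
twice = apply(HADAMARD, once)   # paths into |1> cancel, paths into |0> add

print("after one Hadamard: ", probabilities(once))
print("after two Hadamards:", probabilities(twice))
```

A classical coin flipped twice stays random. The qubit returns to a definite 0 because amplitudes carry signs that can cancel.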

| Processing Challenge | Classical Approach | Quantum Approach |
|---|---|---|
| High-dimensional datasets (1,000+ features) | Often requires dimensionality reduction, with some signal loss | Can encode the feature space in qubit amplitudes, subject to data-loading cost |
| Combinatorial optimization | Heuristic approximations, often suboptimal | Uses interference (QAOA, annealing) to concentrate probability on good solutions |
| Real-time pattern recognition | Bottlenecked by sequential processing | Reported benchmark speedups of up to 1,000× on specific tasks |
| Multi-variable correlation analysis | Pairwise comparisons; loses higher-order correlations | Entangled states can represent higher-order correlations directly |
| Large-scale search tasks | O(N) linear search through dataset | O(√N) oracle queries via Grover’s algorithm |
| Data encryption / security | RSA and elliptic-curve schemes are vulnerable to quantum attacks | Shor’s algorithm drives the threat; the defense is post-quantum cryptography, which runs on classical hardware |

03 — Core Algorithms

Key Quantum Algorithms Driving Big Data Breakthroughs

Quantum’s advantage in big data processing is delivered through specific algorithms — each engineered to outperform classical alternatives on particular problem types. Understanding which algorithm fits which data challenge is fundamental to building an effective quantum analytics strategy.

Grover’s Search Algorithm

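Searches unsorted datasets in O(√N) oracle queries versus classical O(N). For a dataset of one billion records, that means roughly 25,000 Grover iterations (about (π/4)·√N) instead of up to one billion classical lookups. Wall-clock gains are smaller than the query counts suggest, because each quantum query is far slower than a classical memory read and the data must first be made accessible to the quantum processor. Candidate applications include real-time fraud detection and large-scale pattern lookups.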
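Grover’s speedup applies to the number of oracle queries, and a short statevector simulation makes the mechanics visible. The following plain-Python sketch is a toy model with illustrative function names: it tracks every amplitude classically, so it demonstrates the algorithm rather than any speedup.

```python
import math

def grover_iterations(n_items: int) -> int:
    """Near-optimal number of Grover iterations for one marked item."""
    return math.floor(math.pi / 4 * math.sqrt(n_items))

def grover_success_probability(n_items: int, marked: int) -> float:
    """Simulate Grover search and return P(measuring the marked index)."""
    amps = [1 / math.sqrt(n_items)] * n_items
    for _ in range(grover_iterations(n_items)):
        amps[marked] = -amps[marked]             # oracle: flip the marked sign
        mean = sum(amps) / n_items
        amps = [2 * mean - a for a in amps]      # diffusion: reflect about the mean
    return amps[marked] ** 2

print("iterations for N = 64:", grover_iterations(64))
print("success probability:", round(grover_success_probability(64, marked=42), 4))
print("iterations for N = 10**9:", grover_iterations(10 ** 9))
```

Running more iterations than the near-optimal count makes the success probability fall again, so the iteration count is part of the algorithm rather than a tuning detail.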

Quantum Fourier Transform (QFT)

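Performs a Fourier transform on the 2ⁿ amplitudes of an n-qubit state using on the order of n² gates, versus roughly n·2ⁿ operations for the classical Fast Fourier Transform on the same number of values. The result stays encoded in amplitudes that cannot be read out directly, so the QFT is a building block inside other algorithms (phase estimation, period finding) rather than a drop-in FFT replacement. Potentially valuable for time-series analytics, IoT sensor data processing, and financial signal extraction where only a few dominant frequencies are needed.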
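What the QFT does to amplitudes can be checked classically on a small state. This sketch applies the transform's definition directly (a normalized discrete Fourier transform) to a periodic 4-qubit state. The helper names are illustrative, and the simulation costs O(N²) classical work, so it shows the mathematics only.

```python
import cmath
import math

def qft(amplitudes: list[complex]) -> list[complex]:
    """Apply the QFT by definition: y_k = sum_j x_j * exp(2*pi*i*j*k/N) / sqrt(N)."""
    n = len(amplitudes)
    return [
        sum(amplitudes[j] * cmath.exp(2j * cmath.pi * j * k / n) for j in range(n))
        / math.sqrt(n)
        for k in range(n)
    ]

def periodic_state(n: int, period: int) -> list[complex]:
    """Equal superposition of the basis states 0, period, 2*period, ..."""
    support = [j for j in range(n) if j % period == 0]
    amp = 1 / math.sqrt(len(support))
    return [complex(amp) if j % period == 0 else 0j for j in range(n)]

state = periodic_state(16, period=2)
probs = [abs(a) ** 2 for a in qft(state)]
peaks = [k for k, p in enumerate(probs) if p > 1e-9]
print("measurement outcomes with non-zero probability:", peaks)
```

A measurement after the QFT returns one of the peak positions, which are multiples of N divided by the period. Period-finding algorithms use exactly that sampled value; the full spectrum is never read out.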

QAOA — Quantum Approximate Optimization Algorithm

Designed specifically for combinatorial optimization problems — routing, scheduling, portfolio construction. Used in logistics pilots and supply chain optimization. Runs effectively on near-term quantum hardware without requiring full fault tolerance.
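To show the shape of QAOA, here is a depth-1 sketch for MaxCut on a 4-node ring, simulated with a plain-Python statevector. The graph, the grid search over the two angles (standing in for the classical optimizer a real workflow would use), and all names are assumptions made for illustration.

```python
import cmath
import math

# Assumed toy instance: a 4-node ring. The best cut severs all 4 edges.
EDGES = [(0, 1), (1, 2), (2, 3), (3, 0)]
N_QUBITS = 4
DIM = 2 ** N_QUBITS

def cut_value(z: int) -> int:
    """Count edges whose endpoints fall on different sides in bitstring z."""
    return sum(1 for a, b in EDGES if (z >> a) & 1 != (z >> b) & 1)

def qaoa_state(gamma: float, beta: float) -> list[complex]:
    # Uniform superposition with the cost phase exp(-i * gamma * cut) applied.
    state = [cmath.exp(-1j * gamma * cut_value(z)) / math.sqrt(DIM) for z in range(DIM)]
    # Mixer exp(-i * beta * X) on each qubit in turn.
    c, s = math.cos(beta), -1j * math.sin(beta)
    for q in range(N_QUBITS):
        state = [c * state[z] + s * state[z ^ (1 << q)] for z in range(DIM)]
    return state

def expected_cut(gamma: float, beta: float) -> float:
    """Average cut size obtained by measuring the QAOA state."""
    return sum(abs(a) ** 2 * cut_value(z) for z, a in enumerate(qaoa_state(gamma, beta)))

grid = [i * math.pi / 40 for i in range(41)]
best_value, best_gamma, best_beta = max(
    (expected_cut(g, b), g, b) for g in grid for b in grid
)
print("random-guess baseline:", expected_cut(0.0, 0.0))
print("best depth-1 expected cut:", round(best_value, 3))
```

With both angles at zero the circuit reduces to random guessing, which cuts 2 of the 4 edges on average. Tuning the angles raises the expected cut above that baseline without reaching the optimum, which is the "approximate" in the algorithm's name.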

Quantum Machine Learning (QML)

Combines quantum processing with classical ML pipelines. Quantum kernels and Quantum Support Vector Machines (QSVMs) accelerate training on high-dimensional datasets, enabling classification and clustering tasks that exceed classical ML’s practical limits.

Research milestone (2025–2026): Researchers running Grover’s and Shor’s algorithms on IBM quantum hardware through Qiskit, using real-time Twitter feeds and transaction datasets, reported that quantum circuits specifically optimized for big data noise and volume significantly outperform classical equivalents on pattern recognition speed. The result was published in Combinatorial Press’s Journal of Combinatorial Mathematics and Computer Science.

Real-World Examples: Quantum Big Data Processing in Action

Financial Services: From Monte Carlo to Quantum Simulation

Real-World Example

JPMorgan Chase — Quantum Derivative Pricing & Risk Modeling

JPMorgan Chase applied quantum algorithms to derivative pricing problems — specifically tasks where classical computers struggle when scaled to the full scope of market variables. The bank’s quantum pilots demonstrated faster scenario generation for stress testing and improved accuracy in multi-variable risk modeling. Early results show quantum-enabled Monte Carlo simulations running significantly faster than classical equivalents for the same level of statistical confidence.

Beyond JPMorgan, financial institutions broadly are using quantum tools to identify anomalous transaction patterns that classical anomaly detectors miss. Quantum ML models that process full transaction feature sets, without classical dimensionality reduction, are reporting fraud detection accuracy improvements of up to 45% compared to classical baselines in pilot environments.

45% improvement in fraud detection accuracy

70% reduction in portfolio optimization processing time

35% higher accuracy in risk modeling pilots

Logistics & Supply Chain: Solving the Routing Explosion

Real-World Example

IBM × Commercial Vehicle Manufacturer — New York City Delivery Optimization

IBM partnered with a commercial vehicle manufacturer to optimize delivery routes across 1,200 New York City locations using a hybrid classical-quantum approach. Classical systems handled data ingestion and preprocessing; quantum optimization algorithms evaluated the combinatorial routing space simultaneously, converging on solutions that classical heuristics couldn’t match within the required decision window. The result was measurably more efficient routing at a scale that previously required significant approximation.

Real-World Example

Lisbon Transit Authority — Real-Time Quantum Bus Routing

A production pilot in Lisbon deployed quantum processors to dynamically reroute city buses based on live traffic and passenger demand data. The quantum system evaluated far more routing combinations than its classical counterpart within the same time window, improving on-time performance and reducing fuel inefficiency in ways that classical optimization could not replicate at that variable count and update frequency.

Healthcare & Pharma: Processing Quantum-Scale Biological Data

Real-World Example

Pharmaceutical Quantum-Classical Hybrid Pipelines — Drug Discovery Acceleration

Leading pharmaceutical companies are embedding quantum subroutines within classical research pipelines to simulate molecular interactions with precision that classical supercomputers cannot achieve within viable research timelines. A protein folding simulation that takes a classical supercomputer days to complete runs in hours using quantum subroutines — materially compressing drug discovery timelines and unlocking molecular structures that were previously computationally inaccessible. Beyond drug discovery, quantum ML models now cluster patient cohorts across thousands of clinical variables, enabling precision medicine recommendations that classical tools cannot surface.

05 — Architecture

The Hybrid Quantum-Classical Pipeline: How It Actually Works

A common misconception about quantum computing is that it will wholesale replace classical infrastructure. In reality — at least for the foreseeable enterprise horizon — quantum computing is most powerful as a precision co-processor embedded within classical big data architectures. The hybrid model is where real production value is being generated today.

Think of the relationship as analogous to how GPUs transformed machine learning workloads: GPUs didn’t replace CPUs, but they took ownership of the specific computations where their parallel architecture excelled — matrix multiplication, backpropagation, rendering. Quantum processors are entering the same role for an even harder class of problems.

Hybrid architecture flow: Classical systems handle data ingestion, storage, orchestration, and visualization. Quantum subroutines step in for feature engineering on high-dimensional data, combinatorial optimization, anomaly detection at full variable scale, and ML training acceleration. Outputs flow back to classical infrastructure for interpretation and action.

Practical Implementation Pattern

Organizations accessing quantum through IBM Qiskit or Amazon Braket construct quantum circuits that handle specific sub-tasks within existing pipelines. A Databricks or Snowflake environment, for example, can dispatch high-dimensional feature engineering jobs to a quantum processor via API, receive the enriched output, and continue the workflow in classical ML infrastructure — with no rebuild of existing tooling required.
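The dispatch pattern can be sketched without committing to any vendor SDK. In the example below, `HybridStep`, `stub_quantum_job`, and `classical_scale` are hypothetical names. The quantum call is a stub that always times out, so the sketch shows the control flow and fallback only.

```python
from dataclasses import dataclass
from typing import Callable, Sequence

Subroutine = Callable[[Sequence[float]], Sequence[float]]

@dataclass
class HybridStep:
    """One pipeline stage that prefers a quantum subroutine but can fall back."""
    name: str
    quantum: Subroutine             # hypothetical wrapper around a cloud quantum job
    classical_fallback: Subroutine  # existing classical implementation

    def run(self, features: Sequence[float]) -> list[float]:
        try:
            return list(self.quantum(features))
        except (TimeoutError, ConnectionError):
            # Queue delays are common on shared hardware; keep the pipeline moving.
            return list(self.classical_fallback(features))

def stub_quantum_job(features: Sequence[float]) -> Sequence[float]:
    """Stand-in for a real cloud call; always times out in this sketch."""
    raise TimeoutError("quantum queue timed out (simulated)")

def classical_scale(features: Sequence[float]) -> list[float]:
    """Scale features by their largest magnitude."""
    peak = max(abs(v) for v in features) or 1.0
    return [v / peak for v in features]

step = HybridStep("feature_scaling", stub_quantum_job, classical_scale)
result = step.run([2.0, -4.0, 1.0])
print(step.name, "->", result)
```

Keeping the quantum call behind the same signature as the classical function is one way to honor the "no rebuild" goal: the rest of the workflow does not need to know which path produced the output.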

Current reality: Most production-grade quantum workloads in 2026 run through cloud quantum access layers (IBM Quantum Cloud, Amazon Braket, or Google Cloud Quantum AI) rather than on-premises hardware. Cloud access costs are comparable to standard high-performance compute, making meaningful experimentation accessible without capital infrastructure investment.

Quantum Machine Learning: Supercharging Big Data Analytics Models

Quantum Machine Learning (QML) sits at the most commercially significant intersection of quantum computing and big data. It doesn’t replace classical ML — it addresses the specific points where classical approaches hit their limits most painfully.

Quantum Kernel Methods

Classical kernel methods in ML map data into feature spaces where linear classifiers can operate on non-linear patterns. Quantum kernel methods use quantum circuits to perform this mapping into the Hilbert space of the qubits, whose dimension doubles with every qubit added. Classical kernels can also work in very high-dimensional spaces, so the potential advantage lies in feature maps whose inner products are believed to be hard to compute classically. Where such a feature map matches the structure of the data, relationships that standard classical kernels miss can become visible, improving model accuracy on high-dimensional datasets with less domain-specific feature engineering.
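A fidelity kernel can be written out for a very simple feature map. This sketch assumes angle encoding, where each feature sets the rotation of its own qubit, and the function names are illustrative. It also compares the simulated kernel with a closed-form expression, a reminder that this particular feature map is easy to compute classically.

```python
import math

def feature_state(x: list[float]) -> list[float]:
    """Angle encoding: feature x_i rotates qubit i to cos(x_i/2)|0> + sin(x_i/2)|1>."""
    state = [1.0]
    for angle in x:
        qubit = [math.cos(angle / 2), math.sin(angle / 2)]
        state = [a * b for a in state for b in qubit]  # tensor product
    return state

def fidelity_kernel(x: list[float], y: list[float]) -> float:
    """Kernel value = squared overlap between the two encoded states."""
    overlap = sum(a * b for a, b in zip(feature_state(x), feature_state(y)))
    return overlap ** 2

def closed_form_kernel(x: list[float], y: list[float]) -> float:
    """The same kernel computed without simulating any quantum state."""
    return math.prod(math.cos((a - b) / 2) ** 2 for a, b in zip(x, y))

x, y = [0.1, 1.2, 2.0], [0.4, 0.9, 2.5]
print("simulated kernel:  ", fidelity_kernel(x, y))
print("closed-form kernel:", closed_form_kernel(x, y))
```

A kernel matrix built from `fidelity_kernel` can be handed to any classical SVM that accepts precomputed kernels. Feature maps with entangling gates lose the shortcut in `closed_form_kernel`, and that is where quantum hardware might help.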

Quantum Neural Networks (QNNs)

QNNs use parameterized quantum circuits as the analog of classical neural network layers. These circuits can represent multi-dimensional data relationships with far fewer parameters than classical deep learning models — a significant advantage when training data is expensive or scarce. Early benchmarks show QNN training times that are meaningfully shorter than classical equivalents on specific structured datasets.
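The smallest possible parameterized circuit has one qubit and one rotation angle. The sketch below trains it with the parameter-shift rule, a standard way to obtain gradients from circuit evaluations. The learning rate, starting angle, and step count are arbitrary choices for illustration.

```python
import math

def expectation_z(theta: float) -> float:
    """<Z> after applying RY(theta) to |0>: P(measure 0) minus P(measure 1)."""
    amp0, amp1 = math.cos(theta / 2), math.sin(theta / 2)
    return amp0 ** 2 - amp1 ** 2

def parameter_shift_grad(theta: float) -> float:
    """Gradient of <Z> from two extra circuit evaluations at theta +/- pi/2."""
    return (expectation_z(theta + math.pi / 2) - expectation_z(theta - math.pi / 2)) / 2

def train(theta: float = 0.3, learning_rate: float = 0.4, steps: int = 50) -> float:
    """Gradient descent that drives <Z> toward its minimum of -1."""
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_grad(theta)
    return theta

theta = train()
print("trained angle:", round(theta, 4))
print("final <Z>:", round(expectation_z(theta), 6))
```

On hardware, each call to `expectation_z` would be an average over many measured shots, so every gradient entry costs two batches of circuit runs. That overhead needs to be counted in any training-time comparison.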

Quantum Data Fusion

One of the most distinctive QML capabilities for big data teams is quantum data fusion: using entanglement to process multiple simultaneous data streams — IoT sensors, transactional feeds, social signals — without the sequential processing bottlenecks that classical pipelines impose. Rather than ingesting streams one at a time and combining them downstream, quantum systems process multi-source data in entangled superposition, preserving the full cross-stream correlation structure for downstream analysis.

Enterprise benchmark: A financial analytics team processing 2,847-feature trading datasets with quantum-enhanced pattern recognition achieved classification speedups that classical systems could not match within the available computational budget — demonstrating that quantum advantage is already accessible at production feature-set scale.

07 — Sector Guide

Industry-Specific Quantum Big Data Applications Across Sectors

| Industry | Big Data Challenge | Quantum Solution | Measured Impact |
|---|---|---|---|
| Financial Services | Real-time risk across thousands of correlated assets | Quantum Monte Carlo, QAOA portfolio optimization | 35% higher modeling accuracy; 70% faster optimization |
| Logistics | Combinatorial routing across 50K+ daily shipments | D-Wave quantum annealing, QAOA routing | Near-optimal routing at scales classical can’t solve |
| Pharmaceutical | Molecular simulation; clinical cohort clustering | Quantum chemistry simulation; QML clustering | Protein folding: days → hours |
| Retail & E-Commerce | High-dimensional demand forecasting | QML classification on 1,000+ feature sets | Full-feature analytics without reduction trade-offs |
| Energy & Utilities | Grid balancing; renewable integration optimization | Quantum simulation of grid dynamics | Real-time balancing at constraint scales classical can’t match |
| Cybersecurity | Threat detection across massive network logs | Quantum-accelerated anomaly detection; Grover’s search | Faster threat identification; quantum-safe encryption adoption |

08 — Hardware Landscape

Quantum Hardware in 2026: What’s Available and What It Can Do

The quantum hardware landscape has matured significantly entering 2026. The newest quantum error-correction methods are reported to operate close to their theoretical limits, a breakthrough that addresses what has historically been the most significant barrier to scaling quantum systems beyond laboratory conditions. New surface codes require fewer physical qubits per logical qubit, and cryogenic isolation improvements reduce thermal interference that previously destabilized computations.

Major Platforms Available for Enterprise Big Data Work

IBM Quantum (Kookaburra): IBM’s flagship system delivers 1,386 qubits in a multi-chip architecture, scaling to over 4,000 qubits via quantum communication links. Accessible through IBM’s quantum cloud services using the Qiskit SDK, with the most mature developer ecosystem and documentation. Best suited for ML algorithm development, optimization, and general-purpose quantum circuit experimentation.

Google Willow (105 qubits): Google’s Willow processor achieves exponential error suppression as encoded qubit arrays scale — a foundational research milestone. Accessible via Google Cloud’s quantum service. Best for teams on the cutting edge of error correction research and benchmarking.

Amazon Braket (Multi-vendor): AWS’s quantum platform provides access to hardware from multiple vendors, including IonQ and Rigetti, plus powerful simulators, all within the familiar AWS ecosystem. Lowest barrier to entry for organizations already operating on AWS data infrastructure. Pay-as-you-go pricing makes pilot programs accessible without capital commitment.

D-Wave (Quantum Annealing): D-Wave’s annealing architecture is purpose-built for combinatorial optimization — logistics routing, scheduling, supply chain decisions. Among the earliest quantum systems deployed in actual production environments across industries. Not gate-based, but highly effective for the specific class of problems that dominate logistics and operations analytics.

09 — Honest Assessment

Current Limitations: What Quantum Big Data Can’t Do Yet

A credible assessment of quantum computing in big data requires acknowledging where limitations remain real and significant. The gap between controlled benchmarks and general-purpose enterprise production workloads is narrowing — but it hasn’t closed.

Data Loading Bottleneck (QRAM Problem)

Loading classical big data into a quantum system efficiently remains an unsolved engineering challenge. Classical data must be encoded into quantum states, and the device proposed for doing this at scale, quantum random access memory (QRAM), does not yet exist in practical form. The cost of encoding can offset much of the computational gain quantum algorithms provide, particularly where the quantum speed-up is quadratic rather than exponential. This is an active research frontier with proposals still emerging, and organizations should factor data loading overhead into any honest quantum performance projection.
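Amplitude encoding is one common way to picture the loading step. The plain-Python sketch below, with an illustrative function name, shows the appeal (N values fit into about log₂ N qubits) and the catch: computing the target state already touches every value once, and preparing it on hardware generally costs on the order of N operations without QRAM.

```python
import math

def amplitude_encode(values: list[float]) -> tuple[list[float], int]:
    """Return the normalized amplitude vector and the number of qubits it needs."""
    n_qubits = max(1, (len(values) - 1).bit_length())
    dim = 2 ** n_qubits
    padded = list(values) + [0.0] * (dim - len(values))   # pad to a power of two
    norm = math.sqrt(sum(v * v for v in padded))
    if norm == 0:
        raise ValueError("cannot encode an all-zero vector")
    return [v / norm for v in padded], n_qubits

amps, n_qubits = amplitude_encode([3.0, 4.0, 0.0, 0.0, 12.0])
print("qubits needed:", n_qubits)
print("amplitudes:", [round(a, 4) for a in amps])
print("qubits for a billion values:", amplitude_encode([1.0] * 8)[1], "(for 8);",
      (10 ** 9 - 1).bit_length(), "(for 10**9)")
```

Thirty qubits are enough to hold a billion values in principle. The open problem is getting them there quickly enough to preserve the downstream speedup.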

Qubit Stability and Error Rates

Qubits remain sensitive to environmental disturbances: temperature fluctuations, electromagnetic noise, and physical vibrations can all cause decoherence mid-computation. Even with gate fidelities of 99.7% in 2026 and major advances in error correction, running long, complex big data computations without error accumulation still requires fault-tolerant systems that are not yet available at production scale.
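A back-of-the-envelope model shows why a 99.7% gate fidelity still limits circuit size. The sketch assumes that gate errors are independent and that any single error ruins the run. That is a deliberately crude assumption, and the function names are illustrative.

```python
import math

def circuit_success_probability(gate_fidelity: float, n_gates: int) -> float:
    """Chance that every gate succeeds, assuming independent errors."""
    return gate_fidelity ** n_gates

def max_gates_for_target(gate_fidelity: float, target_success: float) -> int:
    """Largest gate count that keeps the success chance at or above the target."""
    return math.floor(math.log(target_success) / math.log(gate_fidelity))

for fidelity in (0.97, 0.997, 0.999):
    print(f"fidelity {fidelity}: "
          f"{max_gates_for_target(fidelity, 0.5)} gates before success drops below 50%, "
          f"{circuit_success_probability(fidelity, 1000):.2e} success at 1,000 gates")
```

Under this model, a few hundred gates is the practical ceiling at 99.7%, while useful big data circuits can need orders of magnitude more. Error correction exists to close that gap.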

Workforce and Skills Gap

Quantum computing requires new disciplines. A shortage of skilled workers, limited enterprise infrastructure, and the complexity of hybrid system design remain genuine adoption barriers. Organizations that don’t begin building quantum literacy now will face a steeper ramp when quantum becomes competitive table stakes.

Balanced outlook: Domain-specific, high-value quantum use cases — financial optimization, logistics routing, molecular simulation, fraud detection — are delivering measurable results today. Broad enterprise quantum advantage across general analytics workloads is a 2028–2031 horizon. The organizations winning long-term are those building hybrid infrastructure and workforce capability now.

10 — Enterprise Roadmap

How to Build Your Quantum Big Data Strategy: Step by Step

The organizations that will lead in quantum-enhanced big data processing aren’t necessarily the ones with the largest budgets — they’re the ones that start building the right foundations earliest. Here’s a practical, phased approach structured around where quantum delivers value today versus tomorrow.

1. Audit Your Hardest Data Problems (Now)

Map your current analytics bottlenecks to quantum-native problem types. Optimization problems (routing, scheduling, portfolio construction), high-dimensional pattern recognition (1,000+ features), real-time anomaly detection at scale, and large-scale search tasks are your highest-priority quantum candidates. Flag datasets where classical processing exceeds 4 hours or where approximation is currently degrading decision quality.

2. Start with Cloud Quantum Simulators (Q1–Q2 2026)

Use IBM Qiskit or Amazon Braket simulators to run proof-of-concept quantum algorithms on representative samples of your actual data. No hardware investment required. Simulators confirm that a circuit is correct and show how an algorithm behaves on small instances of your workload, but their cost grows exponentially with qubit count, so they cannot stand in for hardware performance benchmarks. Use the results to decide which use cases justify further investment before committing resources.

3. Build Hybrid Pipeline Architecture (Q3–Q4 2026)

Design quantum-classical hybrid architectures where quantum subroutines own the most computationally intensive phases — feature engineering, optimization, clustering — and feed enriched outputs into your existing Snowflake, Databricks, or Spark infrastructure. This approach delivers quantum value without rebuilding proven classical systems.

4. Upskill Data Teams in Quantum Concepts

Senior data scientists don’t need physics PhDs — they need practical quantum algorithm literacy: what Grover’s, QFT, QAOA, and QML approaches do; how Qiskit circuits are structured; and how to architect hybrid pipelines. Structured learning programs bring experienced practitioners to productive quantum literacy in 4–8 weeks. Invest in this capability now; the talent competition for quantum-fluent data engineers will intensify sharply by 2028.

5. Migrate Sensitive Data to Quantum-Resistant Encryption

Quantum computing’s threat to classical RSA and elliptic curve encryption is real and timeline-driven. The “harvest now, decrypt later” attack vector — collecting encrypted data today to decrypt when quantum systems mature — is an active risk for any sensitive long-lived data. NIST has published quantum-resistant cryptographic standards. Begin auditing which of your analytics data stores need migration, and prioritize sensitive workloads for early transition.

6. Scale to Production Quantum Workloads (2027–2028)

As hardware stabilizes and gate fidelities continue improving past the 99.9% threshold, graduate proven quantum pilots into production pipelines. By 2028, organizations with 18–24 months of hybrid architecture experience will be positioned to take full advantage of next-generation fault-tolerant quantum systems while competitors are still at the experimentation stage.

11 — Security Implications

Quantum Computing and Big Data Security: Threat and Opportunity

Quantum computing’s relationship with data security is a double-edged dynamic that every big data team needs to understand clearly — because it cuts both ways simultaneously.

On the opportunity side, quantum-accelerated anomaly detection and pattern recognition will meaningfully strengthen cybersecurity analytics. Quantum systems analyzing network traffic logs at full feature scale — without the approximations classical anomaly detectors require — will identify threat patterns that current rule-based systems miss entirely. Quantum-enhanced threat intelligence is already being deployed in satellite networks and defense systems.

On the threat side, sufficiently powerful quantum computers will eventually render current RSA-2048 and elliptic curve encryption obsolete through Shor’s algorithm. The timeline is debated, but the risk is real enough that NIST finalized quantum-resistant cryptographic standards in 2024, and the post-quantum cryptography market reached $1.9B in 2025. The “harvest now, decrypt later” threat — where adversaries collect encrypted big data today to decrypt it when quantum systems mature — means the clock started running before your organization has deployed a single qubit.

Action required now: Audit which datasets in your big data environment depend on RSA or elliptic curve algorithms for key exchange, key wrapping, or signatures. Prioritize long-lived sensitive data (customer records, financial history, proprietary models) for migration to NIST-approved quantum-resistant standards. This isn’t a future-state concern; it’s a present-day architecture decision.
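The audit can start as a simple inventory pass. In the sketch below, the `DataStore` record, the sample inventory, and the retention figures are all hypothetical. The algorithm list covers the public-key families that Shor’s algorithm threatens, and ML-KEM is the key-encapsulation scheme standardized by NIST in 2024.

```python
from dataclasses import dataclass

# Public-key families breakable by Shor's algorithm on a large quantum computer.
QUANTUM_VULNERABLE = {"RSA", "DH", "DSA", "ECDH", "ECDSA"}

@dataclass
class DataStore:
    name: str
    key_algorithm: str    # algorithm protecting the store's encryption keys
    retention_years: int  # how long the data must stay confidential

def migration_priority(stores: list[DataStore]) -> list[DataStore]:
    """Return at-risk stores, longest-lived data first."""
    at_risk = [s for s in stores if s.key_algorithm.upper() in QUANTUM_VULNERABLE]
    return sorted(at_risk, key=lambda s: s.retention_years, reverse=True)

# Hypothetical inventory for illustration only.
inventory = [
    DataStore("clickstream_logs", "RSA", 1),
    DataStore("customer_records", "RSA", 10),
    DataStore("model_registry", "ECDH", 7),
    DataStore("payments_archive", "ML-KEM", 15),  # already post-quantum
]

for store in migration_priority(inventory):
    print(f"{store.name}: {store.key_algorithm}, keep confidential {store.retention_years} years")
```

Sorting by retention period reflects the "harvest now, decrypt later" risk: the longer data must stay secret, the more likely it outlives the encryption protecting it.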

12 — Looking Ahead

The Future of Quantum Big Data: 2026 Through 2030

The trajectory of quantum computing in big data processing through the end of the decade follows a clear arc — one that enterprise data leaders should plan around rather than react to.

Through 2027, the dominant model will be hybrid quantum-classical pipelines handling specific, high-value sub-tasks within classical big data architectures. IBM’s roadmap targets fault-tolerant quantum computing by 2029. Google and IonQ are pursuing similar milestones on overlapping timelines. As hardware crosses the fault-tolerance threshold, the set of computationally viable quantum workloads will expand substantially — from today’s optimization and pattern recognition use cases to broader data analysis, simulation, and inference tasks.

By 2030, industry-specific quantum computing environments — purpose-built for financial modeling, genomics, logistics optimization, and climate simulation — are likely to emerge as the highest-value quantum applications. McKinsey identifies chemicals, life sciences, finance, and mobility as the sectors with the highest near-term quantum value creation potential.

The competitive dynamic is already in motion. Organizations that treated quantum computing as a 2030 problem in 2023 are now realizing they have ground to make up. Those building quantum-ready data infrastructure and quantum-fluent teams in 2026 will hold a structural advantage that compounds over time — not just in processing capability, but in the organizational learning and algorithm development that turns raw quantum access into genuine business intelligence superiority.

The Bottom Line for DataBusinessCentral Readers

Quantum computing in big data processing has crossed from theoretical promise into measurable commercial reality. The proof is in the pilots: JPMorgan’s risk modeling gains, IBM’s routing optimization, Lisbon’s live transit system, pharma’s compressed drug discovery timelines. These aren’t projections — they’re reported outcomes from production-grade work in 2025 and 2026.

The question for data leaders isn’t whether quantum will transform big data processing. It’s whether your organization will be positioned to leverage that transformation when the window for first-mover advantage is still open. That window, based on the current hardware trajectory, runs through approximately 2028. Start building now.

Market Data

Quantum market (2025): $3.5B

Quantum market (2030): $20B

Quantum AI (2026): $638M

Post-quantum crypto (2025): $1.9B

IBM qubit scale: 4,158

Gate accuracy (2026): 99.7%


Key Algorithms

Grover’s Search: O(√N)

QFT: Exponential gain

QAOA: Near-term ready

QML / QSVM: Production pilots
