Every decade or so, a technology arrives that fundamentally rewires the logic of business intelligence. Relational databases did it in the 1980s. Cloud computing reshaped it through the 2000s. Machine learning turbocharged the 2010s. Now, quantum computing is poised to be the next seismic shift — and unlike previous waves, its impact on data analytics is already beginning to materialize in real enterprise environments.

Data volumes are growing at a pace that traditional computing struggles to absorb. An often-cited estimate puts global data creation at roughly 2.5 quintillion bytes per day, yet the vast majority remains unanalyzed, trapped behind computational ceilings that classical processors have difficulty breaking through. Quantum computing offers a fundamentally different approach: rather than evaluating possibilities one by one, quantum algorithms manipulate a superposition of many candidate states and use interference to raise the probability of measuring a good answer. For data teams, this could change everything from how quickly a model can be trained to how deeply fraud can be detected in real time.

Key insight: According to McKinsey’s Quantum Technology Monitor 2025, the three-pillar quantum ecosystem — computing, communication, and sensing — could collectively generate tens of billions in commercial revenue by the mid-2030s. The analytics industry sits at the center of that value creation.

This article breaks down the quantum transformation for data and business intelligence professionals: what’s changing, what’s real versus hyped, and what your organization should be doing about it today.

01. FUNDAMENTALS

Quantum vs. Classical: Why It Changes Everything for Analytics

To grasp what quantum computing unlocks for analytics, it helps to understand what it does differently at its core. Classical computers encode all information as binary bits — a switch that is either off (0) or on (1). Every SQL query you’ve executed, every dashboard you’ve built, every model you’ve trained runs on this binary spine.

Quantum computers use qubits. Through a principle called superposition, a qubit can exist in a weighted combination of 0 and 1 until it is measured, at which point it yields a single definite value. Through entanglement, qubits become correlated so that their measurement outcomes cannot be described independently, although this correlation cannot be used to send signals between them. A third principle, interference, allows quantum algorithms to amplify the amplitudes of correct computational paths while canceling incorrect ones. Combined, these three properties let quantum systems tackle certain classes of problems that would take classical computers impractically long.
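To make the three principles concrete, here is a minimal sketch of a statevector simulation in plain Python. It is not how production quantum SDKs work internally, and the helper names (`apply_gate`, `apply_cnot`, `probabilities`) are invented for this illustration. It shows a Hadamard gate creating superposition, a second Hadamard undoing it through interference, and a CNOT producing an entangled pair.

```python
import math

S = 1 / math.sqrt(2)
HADAMARD = [[S, S], [S, -S]]


def apply_gate(state, gate, target):
    """Apply a 2x2 gate to qubit `target` (qubit 0 is the least significant bit)."""
    new_state = [0.0] * len(state)
    for index, amplitude in enumerate(state):
        bit = (index >> target) & 1
        for out_bit in (0, 1):
            # Same basis index, but with the target bit set to out_bit.
            out_index = (index & ~(1 << target)) | (out_bit << target)
            new_state[out_index] += gate[out_bit][bit] * amplitude
    return new_state


def apply_cnot(state, control, target):
    """Flip `target` in every basis state where `control` is 1."""
    new_state = list(state)
    for index in range(len(state)):
        if (index >> control) & 1:
            new_state[index] = state[index ^ (1 << target)]
    return new_state


def probabilities(state):
    """Measurement probability of each basis state."""
    return [abs(a) ** 2 for a in state]


# Superposition: one qubit with an equal chance of measuring 0 or 1.
plus = apply_gate([1.0, 0.0], HADAMARD, target=0)

# Interference: a second Hadamard cancels the |1> amplitude.
back_to_zero = apply_gate(plus, HADAMARD, target=0)

# Entanglement: two qubits whose measured values always agree (a Bell state).
bell = apply_cnot(
    apply_gate([1.0, 0.0, 0.0, 0.0], HADAMARD, target=0),
    control=0,
    target=1,
)

print(probabilities(plus))
print(probabilities(back_to_zero))
print(probabilities(bell))
```

The Bell state places probability only on the outcomes 00 and 11, so measuring one qubit tells you the other. Note that the state list doubles in length with every added qubit, which is why classical simulation becomes impractical as qubit counts grow.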

| Capability | Classical Computing | Quantum Computing |
|---|---|---|
| Processing Model | Deterministic operations on bits | Operations on superpositions of many states, read out by measurement |
| High-Dimensional Data | Often requires dimensionality reduction (risking lost signal) | Can represent data in exponentially large state spaces |
| Optimization Problems | Approximations; rarely finds global optimum | Samples candidate solutions using superposition and interference; speedups are problem-dependent |
| Real-Time Pattern Detection | Bottlenecked by hardware throughput | Reported speedups of up to 232× on select classification tasks |
| ML Model Training | Hours-to-days on complex datasets | Potentially faster via Quantum Neural Networks (still experimental) |
| Anomaly Detection | Rule-based thresholds, limited nuance | Deep multi-variable correlation detection |

This isn’t purely theoretical. IBM’s roadmap describes its Kookaburra processor as a 1,386-qubit multi-chip design that scales to over 4,000 qubits. Fujitsu and RIKEN unveiled a 256-qubit machine in 2025, with a 1,000-qubit system planned for 2026. Google’s Willow processor demonstrated exponential error suppression as encoded qubit arrays grow, a foundational milestone for reliable production analytics workloads. The hardware is maturing rapidly, and analytics applications are becoming tangible.

Where Quantum Analytics Creates Measurable Business Value

🔐 Financial Services: Risk Modeling & Fraud Detection

Real-World Example

JPMorgan Chase — Quantum Predictive Modeling

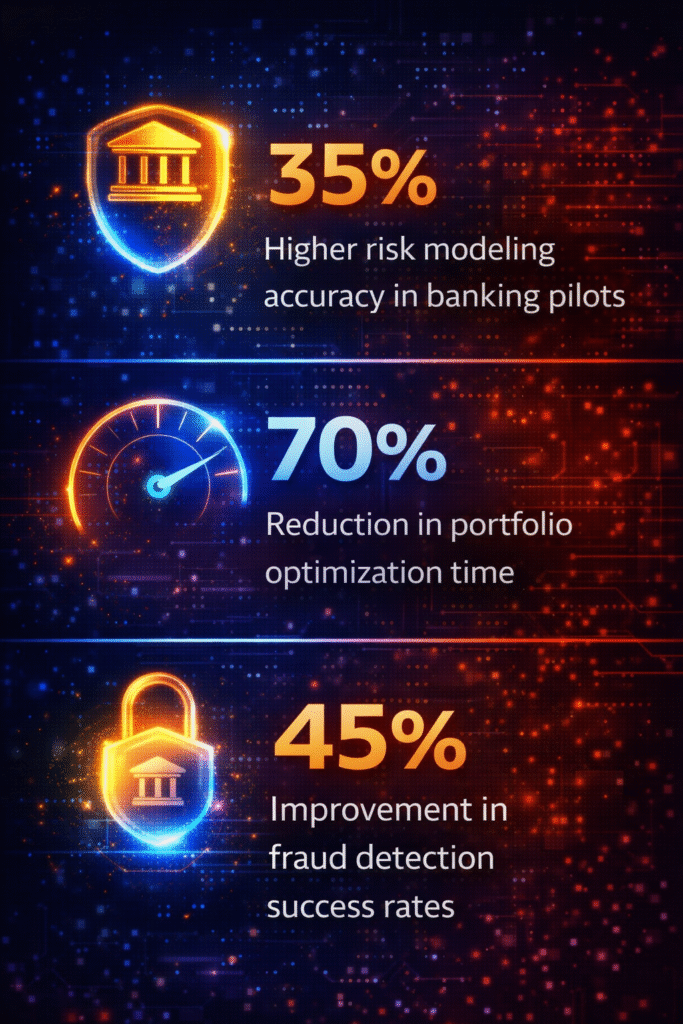

JPMorgan Chase has pioneered “certified randomness” generation through quantum circuits — foundational for cryptographic security and the acceleration of predictive model training. Bank-wide pilots report a 35% increase in risk modeling accuracy and a 70% reduction in portfolio optimization processing time, according to DASCA’s quantum analytics benchmarks.

Traditional risk models struggle when handling thousands of correlated variables across volatile markets. Quantum optimization algorithms encode the portfolio problem, including its correlation structure, into a cost function over qubits and then sample low-cost configurations, rather than enumerating millions of combinations sequentially. Fraud detection pilots are reporting accuracy improvements of up to 45%, which proponents attribute to quantum models picking up subtle, multi-dimensional transaction patterns that classical anomaly detectors miss.
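One common way to pose portfolio selection for a quantum optimizer is as a quadratic cost over binary "hold or skip" variables (a QUBO-style objective). The sketch below is an assumption-laden illustration, not any bank's method: the returns, covariances, `RISK_AVERSION`, `BUDGET`, and `PENALTY` values are all hypothetical, and the search is a classical brute force that a quantum sampler would be meant to replace.

```python
from itertools import product

# Hypothetical expected returns and covariance matrix for four assets.
expected_return = [0.08, 0.12, 0.10, 0.07]
covariance = [
    [0.10, 0.02, 0.04, 0.00],
    [0.02, 0.20, 0.06, 0.01],
    [0.04, 0.06, 0.15, 0.02],
    [0.00, 0.01, 0.02, 0.05],
]
RISK_AVERSION = 0.5  # hypothetical weight on portfolio variance
BUDGET = 2           # hypothetical number of assets to hold
PENALTY = 1.0        # cost applied when the budget is violated


def cost(selection):
    """Quadratic cost of a 0/1 selection vector: lower is better."""
    n = len(selection)
    reward = sum(r * x for r, x in zip(expected_return, selection))
    risk = sum(
        covariance[i][j] * selection[i] * selection[j]
        for i in range(n)
        for j in range(n)
    )
    budget_violation = (sum(selection) - BUDGET) ** 2
    return -reward + RISK_AVERSION * risk + PENALTY * budget_violation


# Brute force: every one of the 2**n selections is scored.
candidates = list(product((0, 1), repeat=len(expected_return)))
best = min(candidates, key=cost)

print(len(candidates), "candidate portfolios")
print("best selection:", best, "cost:", round(cost(best), 4))
```

With four assets there are only 16 candidates, but the count doubles with each added asset, which is the scaling problem that motivates both classical heuristics and quantum approaches.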

🚌 Logistics: Real-Time Route Optimization

Real-World Example

Lisbon Transit Authority — Quantum-Powered Bus Routing

A production pilot in Lisbon deployed quantum processors to reroute city buses using live traffic data in real time. The system outperformed classical optimization by evaluating a vastly larger combination of routing variables simultaneously — reducing inefficiencies and improving on-time rates in ways that classical solvers could not match within the available decision window.

Route optimization is fundamentally a combinatorial problem. As variables grow (traffic conditions, vehicle capacity, passenger demand, road events), the solution space grows exponentially. Classical systems make smart approximations. Quantum annealers like D-Wave’s systems are designed to sample low-energy, near-optimal solutions from that space, often within a short time budget. For supply chain analytics teams, this could mean smarter inventory positioning, lower last-mile costs, and more accurate demand forecasting.
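The combinatorial growth is easy to see in a small classical sketch. The travel-time matrix below is hypothetical, and the exhaustive search is only feasible because there are four stops; the final loop prints how quickly the number of possible stop orderings grows.

```python
from itertools import permutations
from math import factorial

# Hypothetical symmetric travel times (minutes) between a depot (index 0) and 4 stops.
travel = [
    [0, 12, 10, 19, 8],
    [12, 0, 3, 7, 2],
    [10, 3, 0, 6, 20],
    [19, 7, 6, 0, 4],
    [8, 2, 20, 4, 0],
]


def route_time(order):
    """Total time for depot -> stops in `order` -> depot."""
    path = (0, *order, 0)
    return sum(travel[a][b] for a, b in zip(path, path[1:]))


stops = range(1, len(travel))

# Exhaustive search: score every ordering of the stops.
best_order = min(permutations(stops), key=route_time)
print("best order:", best_order, "minutes:", route_time(best_order))

# Number of orderings to check as the stop count grows.
for n in (5, 10, 15, 20):
    print(n, "stops ->", factorial(n), "orderings")
```

At 20 stops there are already more than two quintillion orderings, so exact enumeration is off the table and solvers of any kind, classical or quantum, have to search selectively.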

💊 Pharma & Healthcare: Molecular Simulation & Clinical Analytics

Real-World Example

Hybrid Quantum-Classical Drug Discovery Pipelines

Leading pharmaceutical companies are embedding quantum subroutines into classical research workflows, aiming to simulate molecular interactions with a precision that classical systems cannot achieve within viable research timelines. Simulating a protein folding event can take classical supercomputers days; the goal of quantum subroutines is to cut this to hours, materially accelerating drug discovery pipelines and clinical analytics capabilities.

Beyond drug discovery, quantum-enhanced machine learning models can identify patient cohorts with overlapping clinical risk profiles across thousands of variables — enabling personalized treatment recommendations that classical clustering algorithms cannot surface at that level of granularity.

📈 Retail: Demand Forecasting & Behavioral Analytics

Retail analytics teams have long battled the curse of dimensionality: when feature spaces grow past a few hundred variables, classical models can begin performing worse, not better. Quantum algorithms operate natively in very high-dimensional state spaces, which may reduce the need for aggressive feature reduction. A European financial services firm reportedly demonstrated this effect when analyzing trading data with 2,847 features, achieving classification speedups at a scale it considered impractical on classical hardware. For retail, the implication is demand forecasting that simultaneously incorporates weather, social sentiment, competitor pricing, inventory signals, and browsing behavior, with fewer feature reduction trade-offs.

03. ML & AI

Quantum-Enhanced Machine Learning: What’s Actually Entering Production

One of the most immediately transformative near-term applications lies in machine learning — not replacing classical ML, but supercharging it. The Quantum AI market alone is projected to reach $638 million in 2026, reflecting the pace at which organizations are moving from pilot to partial production.

Quantum Neural Networks (QNNs) and Quantum Support Vector Machines (QSVMs) aim to improve predictive performance on complex datasets by streamlining training for deep learning, regression, classification, and clustering models. These approaches use quantum circuits to represent variable relationships in entirely new ways, with the goal of reducing training time and increasing model performance on high-dimensional data problems.

Key Quantum-ML Techniques Entering Production

Quantum Kernel Methods use quantum circuits to transform data into new feature spaces, enabling classical classifiers to capture complex relationships that would otherwise require prohibitive compute. This is particularly effective in noisy or high-dimensional environments like financial time-series data.
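Here is a minimal sketch of the idea behind a quantum kernel, simulated classically in plain Python. It assumes a very simple feature map (one qubit per feature, each rotated by an angle equal to the feature value), which is an illustrative choice and not something the benchmarks cited here specify. The kernel value is the squared overlap between two encoded states; the function names are invented for this example.

```python
import math


def feature_state(features):
    """Encode each feature as one qubit: cos(x/2)|0> + sin(x/2)|1>.

    Returns the full statevector (tensor product of all qubits).
    """
    state = [1.0]
    for angle in features:
        qubit = (math.cos(angle / 2), math.sin(angle / 2))
        state = [amp * q for amp in state for q in qubit]
    return state


def quantum_kernel(x, y):
    """Squared overlap |<phi(x)|phi(y)>|^2 of the two encoded states."""
    overlap = sum(a * b for a, b in zip(feature_state(x), feature_state(y)))
    return overlap ** 2


def gram_matrix(samples):
    """Pairwise kernel values, the input a kernel classifier needs."""
    return [[quantum_kernel(a, b) for b in samples] for a in samples]


# Hypothetical two-feature samples, already scaled to angles in radians.
samples = [[0.3, 1.2], [0.5, 0.1], [2.0, 2.5]]
for row in gram_matrix(samples):
    print([round(value, 3) for value in row])
```

Because this feature map never entangles qubits, it is easy to simulate classically and offers no quantum advantage on its own; practical proposals use entangling circuits whose overlaps are estimated on hardware. The resulting Gram matrix can be handed to a classical classifier that accepts precomputed kernels, such as scikit-learn's `SVC(kernel="precomputed")`.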

Quantum Dimensionality Reduction is proposed as an alternative to classical techniques like PCA and t-SNE, which can discard important data correlations during feature compression. Quantum algorithms aim to preserve complex interdependencies during transformation, resulting in richer downstream models with higher predictive accuracy.

Quantum Data Fusion uses entanglement to process multiple data streams simultaneously — IoT sensors, transactional systems, and social feeds — without the sequential processing bottlenecks of classical pipelines. For real-time analytics platforms processing continuous high-volume feeds, this is a step-change in what’s technically achievable.

Business impact: Teams using quantum-enhanced visualization and analytics tools report 40% faster insight discovery and 60% improved stakeholder comprehension of complex analytical outputs, according to BQPSim enterprise deployment benchmarks.

04. MARKET

Competitive Landscape: Who’s Shaping Quantum Analytics

The quantum computing ecosystem has evolved from academic labs to a multi-billion-dollar commercial arena. Understanding which players are building analytics-relevant quantum capabilities is critical for enterprise technology leaders making investment decisions today.

IBM Quantum — Kookaburra Processor

IBM’s Kookaburra design features 1,386 qubits in a multi-chip architecture, scaling to 4,158 qubits via quantum communication links. IBM’s quantum systems are accessible through its cloud platform using the open-source Qiskit SDK, giving enterprise analytics teams a way to run quantum algorithm pilots without on-premise hardware investment.

Google Willow — Exponential Error Suppression

Google’s 105-qubit Willow processor achieves exponential error suppression as encoded qubit arrays scale — a foundational milestone for reliable quantum analytics production workloads. Accessible via Google Cloud’s quantum computing service.

Amazon Braket — Cloud-Native Multi-Vendor Access

Amazon Braket provides access to multiple quantum hardware providers (such as IonQ and Rigetti) plus simulators. For analytics teams already in the AWS ecosystem, it’s the most accessible entry point for quantum algorithm experimentation integrated within existing data pipelines.

D-Wave — Quantum Annealing for Optimization

D-Wave specializes in quantum annealing, particularly suited to combinatorial optimization — logistics routing, production scheduling, supply chain analytics. D-Wave systems are among the earliest deployed in real production environments across multiple industries.

05. ANALYSIS

Platform Gap Analysis & Strategic Solutions

Analyzing where established analytics platforms currently fall short reveals precisely where quantum computing fills the gap most urgently — and where your integration investment will generate the highest near-term return.

Classical Analytics Platforms vs. Quantum Capabilities: Gap Analysis

- Tableau / Power BI: Excellent for descriptive analytics and visualization but fundamentally limited to pre-aggregated structured datasets. Quantum-enhanced preprocessing and real-time multi-source data fusion will unlock richer live data layers these tools cannot currently ingest at speed. Solution: Integrate quantum-classical hybrid preprocessing pipelines upstream of BI visualization layers.

- Snowflake / Databricks: Leading cloud data platforms with strong ML capabilities, but classical compute constraints limit performance on ultra-high-dimensional datasets. Solution: Embed quantum subroutines for feature engineering and dimensionality reduction tasks, feeding enriched outputs into existing Snowflake or Databricks workflows.

- AWS SageMaker / Google Vertex AI: Model training pipelines bottleneck on large, complex, unstructured datasets. Quantum Neural Networks can pre-train feature representations that classical fine-tuning then specializes more efficiently. Solution: Adopt hybrid quantum-classical training pipelines for high-dimensional classification tasks, using Braket or Qiskit for the quantum phases.

- Classical Fraud Detection Systems: Rule-based thresholds and classical anomaly models miss subtle, multi-variable fraud patterns in real time. Solution: Deploy quantum-enhanced anomaly detection layers for high-value transaction streams, accessible via IBM Qiskit or Amazon Braket APIs, without replacing existing infrastructure.

- Supply Chain & ERP Analytics: Route and inventory optimization using classical solvers leaves meaningful efficiency on the table due to combinatorial complexity limits. Solution: Integrate D-Wave quantum annealing into the optimization layers of existing ERP analytics stacks for production-grade improvement.

Strategic Takeaway: Quantum computing doesn’t displace your existing analytics stack — it extends it. The near-term winning posture is a hybrid architecture: quantum algorithms handling the most computationally intensive sub-tasks, feeding outputs into classical infrastructure your teams already operate effectively.
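The hybrid pattern can be sketched as a loop in which a classical optimizer repeatedly calls a quantum subroutine and adjusts its parameters. In the sketch below, `quantum_expectation` is a hypothetical classical stand-in for that subroutine (a single simulated qubit), and the starting angle, learning rate, and step count are arbitrary choices. The gradient uses the parameter-shift rule, a standard technique for circuits built from rotation gates.

```python
import math


def quantum_expectation(theta):
    """Stand-in for a quantum call: <Z> after rotating |0> by RY(theta).

    On real hardware this value would be estimated from repeated measurements.
    """
    amp0, amp1 = math.cos(theta / 2), math.sin(theta / 2)
    return amp0 ** 2 - amp1 ** 2


def parameter_shift_gradient(evaluate, theta):
    """Gradient from two extra circuit evaluations at theta +/- pi/2."""
    shift = math.pi / 2
    return (evaluate(theta + shift) - evaluate(theta - shift)) / 2


def minimize(evaluate, theta=0.4, learning_rate=0.4, steps=60):
    """Classical gradient descent wrapped around the quantum evaluation."""
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(evaluate, theta)
    return theta, evaluate(theta)


best_theta, best_value = minimize(quantum_expectation)
print("theta:", round(best_theta, 4), "expectation:", round(best_value, 6))
```

The division of labor is the point: orchestration, parameter updates, and storage stay classical, and only the evaluation step would be dispatched to a quantum backend.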

How to Prepare Your Organization: A Practical Roadmap

The organizations that win in the quantum era won’t necessarily be the first to run a quantum algorithm — they’ll be the ones that built quantum-ready data pipelines, hybrid infrastructure, and upskilled analytics teams before quantum reached full production scale. The right time to start is now.

1. Identify Your Quantum-Priority Problems (Now)

Map your analytics bottlenecks to quantum-native problem types: optimization, high-dimensional pattern recognition, real-time multi-source data fusion. Flag datasets with 1,000+ features or processing times exceeding 4 hours as prime candidates for quantum pilot programs.
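A triage rule like this is simple to encode. The sketch below assumes a hypothetical `Workload` record and hypothetical workload names; the two thresholds come from the guidance above, while requiring a matching problem type in addition to a threshold is one possible interpretation of it.

```python
from dataclasses import dataclass

FEATURE_THRESHOLD = 1000        # "1,000+ features"
RUNTIME_THRESHOLD_HOURS = 4.0   # "processing times exceeding 4 hours"
QUANTUM_NATIVE_TYPES = {"optimization", "pattern_recognition", "data_fusion"}


@dataclass
class Workload:
    name: str
    feature_count: int
    runtime_hours: float
    problem_type: str


def is_pilot_candidate(workload):
    """True when the workload is a quantum-native problem type at sufficient scale."""
    at_scale = (
        workload.feature_count >= FEATURE_THRESHOLD
        or workload.runtime_hours > RUNTIME_THRESHOLD_HOURS
    )
    return workload.problem_type in QUANTUM_NATIVE_TYPES and at_scale


# Hypothetical inventory of analytics workloads.
workloads = [
    Workload("fraud_scoring", 2847, 1.5, "pattern_recognition"),
    Workload("weekly_sales_report", 40, 0.2, "reporting"),
    Workload("fleet_routing", 120, 6.0, "optimization"),
    Workload("churn_model", 300, 2.0, "pattern_recognition"),
]

candidates = [w.name for w in workloads if is_pilot_candidate(w)]
print(candidates)
```

Running a filter like this over a real workload catalog gives a short, defensible list to take into the simulator phase.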

2. Begin With Cloud-Based Quantum Simulators (Q1–Q2 2026)

Use IBM Qiskit or Amazon Braket simulators to run proof-of-concept quantum algorithms on your data without hardware investment. These platforms offer cost-effective entry points for analytics teams to build quantum literacy and validate use case viability before committing further resources.

3. Build Hybrid Pipelines (Q3–Q4 2026)

Design quantum-classical hybrid architectures where quantum subroutines handle computationally intensive phases — optimization, feature engineering, anomaly detection — while classical systems handle orchestration, storage, and visualization in the ways your teams already know.

4. Upskill Your Analytics Teams in Quantum Concepts

Data analysts don’t need to become quantum physicists. But familiarity with Qiskit, quantum circuit design basics, and key quantum algorithm categories (annealing, QNN, QSVM) will be table stakes for senior analytics roles within 3–5 years. Investing in structured training programs now creates durable competitive advantage.

5. Address Post-Quantum Security in Your Data Architecture

Quantum computing doesn’t just power analytics — it threatens existing encryption standards. The post-quantum cryptography market is valued at $1.9B in 2025, driven by “harvest-now, decrypt-later” attack vectors. Audit your data security architecture and begin migrating sensitive analytics workloads to quantum-resistant algorithms.
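A first-pass audit can be as simple as classifying each system by the public-key algorithm it relies on and ordering the at-risk ones by how long their data must stay confidential, since long-lived data is the most exposed to harvest-now, decrypt-later collection. The inventory below is hypothetical; the quantum-resistant set lists the algorithms NIST standardized in FIPS 203, 204, and 205.

```python
# Public-key schemes that Shor's algorithm would break on a large fault-tolerant machine.
QUANTUM_VULNERABLE = {"RSA", "DSA", "DH", "ECDH", "ECDSA"}

# Algorithms standardized by NIST in FIPS 203, 204, and 205.
QUANTUM_RESISTANT = {"ML-KEM", "ML-DSA", "SLH-DSA"}

# Hypothetical inventory: (system, algorithm, years the data must stay confidential).
INVENTORY = [
    ("warehouse-backups", "RSA", 10),
    ("bi-dashboard-tls", "ECDH", 1),
    ("customer-pii-archive", "RSA", 25),
    ("partner-api", "ML-KEM", 5),
    ("legacy-etl-link", "custom-cipher", 3),
]


def classify(algorithm):
    """Return 'migrate', 'ok', or 'review' for an algorithm name."""
    if algorithm in QUANTUM_VULNERABLE:
        return "migrate"
    if algorithm in QUANTUM_RESISTANT:
        return "ok"
    return "review"  # unknown or unlisted: needs a human look


def migration_queue(inventory):
    """Quantum-vulnerable systems, longest confidentiality requirement first."""
    at_risk = [row for row in inventory if classify(row[1]) == "migrate"]
    return sorted(at_risk, key=lambda row: row[2], reverse=True)


for system, algorithm, years in migration_queue(INVENTORY):
    print(f"{system}: {algorithm}, protect for {years} years")
```

Anything that lands in the "review" bucket, such as proprietary or undocumented ciphers, deserves manual assessment and should not be assumed safe.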

07. CONCLUSION

The Analytics Imperative Is Quantum

Quantum computing’s impact on data analytics is transitioning from theoretical promise to measurable commercial reality in 2026. The global quantum computing market reached $1.8–3.5 billion in 2025, with aggressive forecasts pointing to $20 billion by 2030. Governments worldwide have committed billions in national quantum initiatives. Enterprise giants — from JPMorgan to Volkswagen to leading pharma companies — are already running production-grade pilots that are generating measurable business outcomes.

For data and analytics professionals, the message isn’t that you need to master quantum mechanics today. It’s that the computational ceiling of classical analytics — the one that forces you to reduce features, approximate optimizations, and batch-process data that should be real-time — has a replacement technology actively maturing. Organizations that understand where quantum excels, build hybrid architectures bridging both paradigms, and cultivate quantum literacy in their analytics teams now will define who leads in data-driven decision-making through the next decade.

Bottom line for DataBusinessCentral readers: Quantum computing isn’t the end of classical analytics — it’s the beginning of what analytics can truly become. The organizations that start their quantum analytics journey in 2026 won’t just keep pace with competitors. They’ll define the new ceiling everyone else tries to reach.

📊 Market Snapshot

- Market Size (2025): $3.5B
- Market Size (2029): $5.3B
- Market Size (2030): $20.2B
- CAGR: 32.7%
- IBM Qubit Scale: 4,158
- US Gov. Investment: $2.5B