What Is Edge Computing?

Edge computing is a distributed computing paradigm that brings computation and data storage physically closer to the sources of data — the “edge” of the network — rather than relying on a centralized data center or cloud far away.

The term “edge” refers to the geographic and network edge: devices like sensors, cameras, routers, gateways, and local servers that exist in factories, hospitals, vehicles, retail stores, and homes.

📡 Core Idea

Instead of sending raw data thousands of miles to a cloud server, process it right where it’s generated. Send only results, insights, or anomalies upstream.
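As a rough illustration of "process locally, send only results", the sketch below collapses a window of raw readings into one small summary plus any out-of-range values. The `summarizeWindow` helper, the reading shape, and the threshold of 80 are assumptions made for this example, not part of any particular platform.

```javascript
// Hypothetical helper: reduce a window of raw readings to one compact summary.
// Only this summary (and the anomalous readings) would be sent upstream.
function summarizeWindow(readings, threshold) {
  if (readings.length === 0) {
    return { count: 0, min: null, max: null, mean: null, anomalies: [] };
  }
  let min = Infinity;
  let max = -Infinity;
  let sum = 0;
  const anomalies = [];
  for (const r of readings) {
    if (r.value < min) min = r.value;
    if (r.value > max) max = r.value;
    sum += r.value;
    if (r.value > threshold) anomalies.push(r); // keep full detail only for outliers
  }
  return { count: readings.length, min, max, mean: sum / readings.length, anomalies };
}

// Illustrative data: four readings, one of them above the threshold.
const windowReadings = [
  { t: 0, value: 71.0 },
  { t: 1, value: 72.5 },
  { t: 2, value: 85.5 },
  { t: 3, value: 71.0 },
];
const summary = summarizeWindow(windowReadings, 80);
console.log(JSON.stringify(summary));
```

In a real deployment the window length and the anomaly rule would depend on the sensor and the process being monitored.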

Why Did Edge Computing Emerge?

Four forces drove the rise of edge computing:

⚡ Latency Demands

Applications like autonomous vehicles and real-time control systems cannot tolerate the 50–200ms round-trip to a distant cloud.

📊 Data Volume Explosion

IoT devices generate petabytes daily. Sending all of it to the cloud is expensive, slow, and often unnecessary.

🔒 Privacy & Sovereignty

Regulations like GDPR restrict cross-border data movement. Processing locally avoids compliance risks.

📡 Connectivity Gaps

Remote sites — mines, ships, aircraft — have unreliable or expensive WAN links. Local processing keeps operations running offline.
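One common way to keep working through WAN outages is a store-and-forward buffer: messages queue locally while the link is down and drain in order once it returns. The sketch below is a minimal in-memory version; the `StoreAndForwardBuffer` class, its drop-oldest policy, and the `send` callback are assumptions for illustration, and a production node would normally persist the queue to disk.

```javascript
// Minimal in-memory store-and-forward queue (illustrative, not persistent).
class StoreAndForwardBuffer {
  constructor(capacity) {
    this.capacity = capacity;
    this.queue = [];
    this.dropped = 0; // how many old messages were discarded to make room
  }

  // Queue a message; when full, drop the oldest one (one possible policy).
  enqueue(message) {
    if (this.queue.length >= this.capacity) {
      this.queue.shift();
      this.dropped += 1;
    }
    this.queue.push(message);
  }

  // Try to deliver queued messages in order. `send` is supplied by the caller
  // and returns true on success; the first failure stops the flush so the
  // remaining messages stay queued for the next attempt.
  flush(send) {
    let sent = 0;
    while (this.queue.length > 0) {
      if (!send(this.queue[0])) break;
      this.queue.shift();
      sent += 1;
    }
    return sent;
  }
}

// Simulate an outage: five messages arrive while only three fit in the buffer.
const buffer = new StoreAndForwardBuffer(3);
for (let i = 1; i <= 5; i++) buffer.enqueue({ seq: i });

// Link is back: deliver whatever survived.
const delivered = [];
const sentCount = buffer.flush((msg) => {
  delivered.push(msg.seq);
  return true;
});
console.log(sentCount, delivered, buffer.dropped);
```

Dropping the oldest data suits telemetry where fresh values matter most; audit-style data would more likely call for a larger persistent queue and back-pressure instead.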

Edge vs. Cloud vs. Fog

These three terms are often confused. Understanding where each sits in the data processing hierarchy is foundational.

| Dimension | Cloud Computing | Fog Computing | Edge Computing |
|---|---|---|---|
| Location | Remote data centers | Local network nodes | Device / on-premise |
| Latency | High (50–200ms+) | Medium (10–50ms) | Very low (<5ms) |
| Compute Power | Very High | Medium | Low–Medium |
| Bandwidth Cost | High | Medium | Low |
| Data Privacy | Risk (leaves premises) | Partial control | Stays on-site |
| Offline Operation | No | Partial | Yes |
| Typical Use | Analytics, storage, AI training | Building/campus networks | Real-time sensing, control |

💡 Key Distinction

Fog computing is a Cisco-coined term for a layer between cloud and edge — often a local gateway or mini-server. Edge computing is broader, sometimes including fog, and always means moving compute closer to data sources.
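The latency and offline rows of the comparison can be turned into a rough placement rule of thumb. The sketch below is only that: `chooseTier` is a hypothetical helper, and its cut-offs simply reuse the indicative figures from the table (fog from about 10ms, cloud from about 50ms), which will differ in any real network.

```javascript
// Rule-of-thumb tier selection based on the indicative figures in the table.
// Picks the most centralized tier whose best-case latency still fits the budget.
function chooseTier({ latencyBudgetMs, mustRunOffline }) {
  // Only the edge tier is listed as fully operable offline.
  if (mustRunOffline) return "edge";
  // Fog starts at roughly 10ms, so tighter budgets need on-device compute.
  if (latencyBudgetMs < 10) return "edge";
  // Cloud starts at roughly 50ms, so budgets below that point to fog.
  if (latencyBudgetMs < 50) return "fog";
  return "cloud";
}

console.log(chooseTier({ latencyBudgetMs: 5, mustRunOffline: false }));
console.log(chooseTier({ latencyBudgetMs: 30, mustRunOffline: false }));
console.log(chooseTier({ latencyBudgetMs: 500, mustRunOffline: false }));
console.log(chooseTier({ latencyBudgetMs: 500, mustRunOffline: true }));
```

A fuller decision would also weigh compute needs, bandwidth cost, and data residency, which are the other rows of the table.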

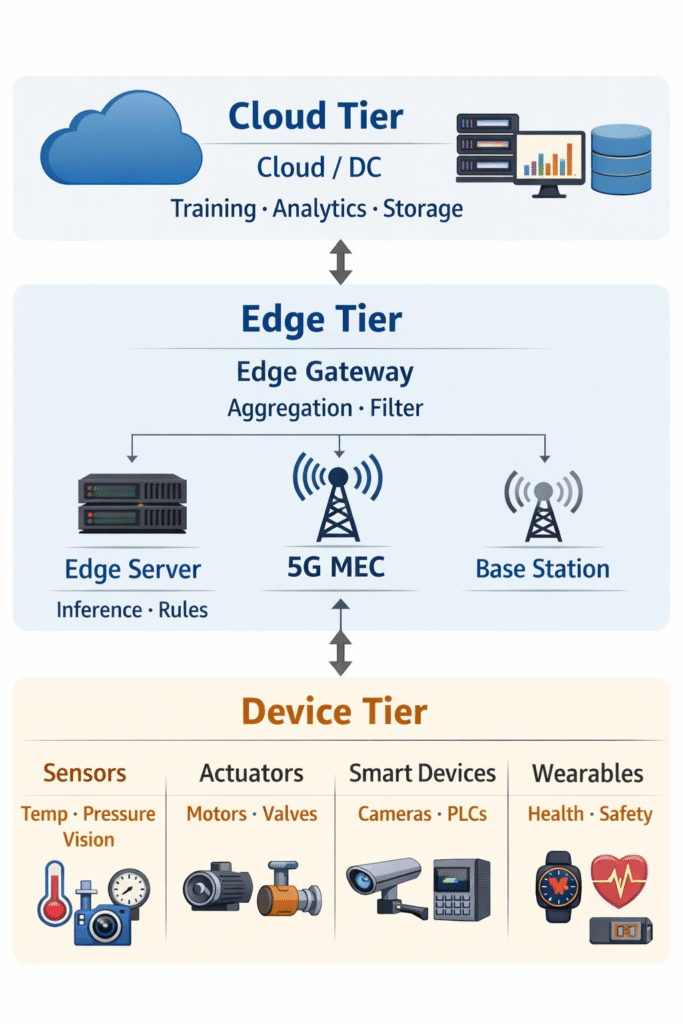

Core Architecture

A typical edge deployment follows a three-tier hierarchy: devices at the bottom, edge nodes in the middle, and cloud at the top.

Edge Node Components

An edge node (also called an edge server, gateway, or MEC node) typically contains:

- Compute: ARM/x86 CPU, sometimes FPGA or GPU for inference
- Memory & Storage: Local RAM + SSD for buffering and caching
- Connectivity: Ethernet, Wi-Fi, 4G/5G, LoRaWAN, or RS-485 for legacy
- Software Stack: Containerized workloads (Docker/K3s), OS (Linux-based), ML runtimes
- Security Module: TPM chip, hardware encryption, secure boot

Protocols & Standards

Edge computing relies on a stack of protocols for device communication, data transport, and management.

| Protocol | Layer | Use Case |
|---|---|---|
| MQTT | Application | Lightweight pub/sub for IoT sensors over low-bandwidth links |
| AMQP | Application | Reliable message queuing between edge and cloud |
| OPC-UA | Application | Industrial automation: PLC and SCADA integration |
| CoAP | Application | Constrained devices (microcontrollers, battery-powered nodes) |
| gRPC | Application | Fast RPC between edge microservices |
| 5G / LTE | Transport | Wide-area wireless connectivity for mobile edge nodes |
| LoRaWAN | Transport | Long-range, low-power for agricultural/remote sensors |
| TLS 1.3 | Security | Encrypted communication between all tiers |
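MQTT's pub/sub model routes messages by hierarchical topic, and subscribers can use wildcard filters: `+` matches exactly one topic level and `#` matches all remaining levels. The sketch below shows how such matching works in principle. `topicMatches` is a hypothetical helper written for this article, and it deliberately ignores special cases such as `$`-prefixed system topics.

```javascript
// Simplified MQTT topic-filter matching ('+' = one level, '#' = all remaining levels).
function topicMatches(filter, topic) {
  const f = filter.split("/");
  const t = topic.split("/");
  for (let i = 0; i < f.length; i++) {
    // '#' is only valid as the last filter level; it matches the parent and everything below it.
    if (f[i] === "#") return i === f.length - 1;
    // Topic ran out of levels before the filter did.
    if (i >= t.length) return false;
    // '+' accepts any single level; otherwise the levels must be identical.
    if (f[i] !== "+" && f[i] !== t[i]) return false;
  }
  // Without '#', filter and topic must have the same depth.
  return f.length === t.length;
}

console.log(topicMatches("factory/zone-3/+", "factory/zone-3/temperature"));
console.log(topicMatches("factory/zone-3/+", "factory/zone-3/temperature/raw"));
console.log(topicMatches("factory/#", "factory/zone-4/humidity"));
```

A filter like `factory/zone-3/+` therefore lets one edge node receive every sensor type in a zone without subscribing to each topic individually.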

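```javascript
// Example: MQTT message from a temperature sensor, written against the MQTT.js client.
// Assumptions: the broker URL is a placeholder, and triggerLocalAlert and
// forwardToCloud are application-defined helpers that are not shown here.
const mqtt = require("mqtt");
const client = mqtt.connect("mqtt://localhost:1883");

// Publisher (Edge Device → Edge Broker)
client.publish("factory/zone-3/temperature", JSON.stringify({
  device_id: "sensor-042",
  value: 74.3,
  unit: "celsius",
  timestamp: Date.now(),
  quality: "good"
}), { qos: 1, retain: false });

// Subscriber (Edge Node processing logic)
client.subscribe("factory/zone-3/+", { qos: 1 });

// Messages arrive through the "message" event, not the subscribe callback.
client.on("message", (topic, message) => {
  const data = JSON.parse(message.toString());
  if (data.value > 80) {
    triggerLocalAlert(data.device_id); // react locally first
    forwardToCloud(data);              // only anomalies go upstream
  }
});
```

Industry Use Cases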

Edge computing has found deep adoption across industries where real-time processing and local autonomy matter most.

- 🏭 Manufacturing: Predictive maintenance on CNC machines; quality inspection via edge AI cameras; OT/IT integration
- 🚗 Autonomous Vehicles: LiDAR/camera fusion, lane detection, and obstacle avoidance with sub-10ms latency
- 🏥 Healthcare: Patient vitals monitoring with on-device alerting; medical imaging inference in hospitals with no cloud dependency
- 🏬 Retail: Real-time shelf analytics, footfall heatmaps, automated checkout via edge computer vision
- ⚡ Energy & Utilities: Smart grid load balancing, pipeline leak detection, renewable energy micro-grid control
- 🌾 Agriculture: Drone-based crop monitoring, soil sensors with offline analytics in remote fields
- 🏙️ Smart Cities: Traffic signal optimization, public safety camera analytics, waste management routing
- 🎮 Gaming / XR: Multi-access Edge Computing (MEC) for cloud gaming and AR with near-zero latency

Intermediate Concepts

Edge AI & TinyML

Edge AI refers to running machine learning models directly on edge devices or nodes, without cloud round-trips. This relies on lightweight model formats and runtimes like ONNX, TensorFlow Lite, and OpenVINO, with models often quantized from FP32 to INT8 or even binary precision.

TinyML pushes further — running inference on microcontrollers with as little as 256KB of RAM. Tools like Edge Impulse and TensorFlow Lite Micro enable this.

⚠ Trade-off

Quantized models on edge devices sacrifice some accuracy (~1–3%) for a 4× reduction in size and 2–4× inference speedup. Always benchmark your use case.
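To see where the 4× size reduction comes from, the sketch below applies symmetric per-tensor INT8 quantization to a tiny array of 32-bit float weights: each value goes from four bytes to one, at the cost of a small rounding error. This is one simple scheme chosen for illustration; `quantizeInt8` and `dequantize` are hypothetical helpers, and real toolchains typically use calibrated, per-channel variants.

```javascript
// Symmetric per-tensor quantization: map [-maxAbs, maxAbs] onto integers [-127, 127].
function quantizeInt8(weights) {
  let maxAbs = 0;
  for (const w of weights) maxAbs = Math.max(maxAbs, Math.abs(w));
  const scale = maxAbs === 0 ? 1 : maxAbs / 127; // avoid dividing by zero for all-zero tensors
  const q = new Int8Array(weights.length);
  for (let i = 0; i < weights.length; i++) {
    const rounded = Math.round(weights[i] / scale);
    q[i] = Math.max(-127, Math.min(127, rounded)); // clamp to the INT8 range used here
  }
  return { q, scale };
}

// Recover approximate float values from the quantized tensor.
function dequantize({ q, scale }) {
  const out = new Float32Array(q.length);
  for (let i = 0; i < q.length; i++) out[i] = q[i] * scale;
  return out;
}

const weights = new Float32Array([0.5, -1.0, 0.25, 0.75]);
const quantized = quantizeInt8(weights);
const restored = dequantize(quantized);

// Storage shrinks from 4 bytes per weight to 1 byte per weight.
const sizeRatio = weights.byteLength / quantized.q.byteLength;

// Worst-case reconstruction error across the tensor.
let maxError = 0;
for (let i = 0; i < weights.length; i++) {
  maxError = Math.max(maxError, Math.abs(weights[i] - restored[i]));
}
console.log(sizeRatio, maxError, quantized.scale);
```

The per-weight error is bounded by about half the scale factor; how that translates into end-to-end accuracy depends on the model, which is why benchmarking on your own data matters.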

Containerization at the Edge

Modern edge deployments use containers to package and deploy workloads consistently across thousands of heterogeneous nodes.

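```yaml
# K3s (lightweight Kubernetes): deploy an inference workload
apiVersion: apps/v1
kind: Deployment
metadata:
  name: vision-inference
spec:
  replicas: 1
  selector:
    matchLabels:
      app: vision
  template:
    metadata:
      labels:
        app: vision
    spec:
      nodeSelector:
        node-role: edge-gpu  # target edge node with GPU
      containers:
        - name: inference
          image: myregistry/yolov8-edge:latest
          resources:
            limits:
              nvidia.com/gpu: 1  # GPU passthrough (needs the NVIDIA device plugin)
          volumeMounts:
            - name: camera-feed
              mountPath: /dev/video0
      # Assumed addition: the mount above needs a matching volume definition.
      # Exposing a host device this way usually also requires a privileged
      # securityContext or a device plugin, depending on the cluster.
      volumes:
        - name: camera-feed
          hostPath:
            path: /dev/video0
```

Multi-Access Edge Computing (MEC)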

MEC, standardized by ETSI, embeds compute nodes inside the 5G/4G radio access network — at base stations or aggregation points. This brings cloud-like APIs within 1–5ms of mobile devices, enabling use cases like AR/VR offloading, V2X (Vehicle-to-Everything), and real-time video analytics for telcos.

Federated Learning at the Edge

Instead of sending raw data to the cloud for ML training, federated learning trains models locally on each edge node and sends only model updates (weights or gradients) to a central aggregator, which combines them into a shared global model. This preserves privacy, reduces bandwidth, and enables continuous on-device learning.
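The aggregation step can be sketched with federated averaging, a widely used scheme in which each node's weights count in proportion to how many local samples it trained on. The code below is a minimal illustration under that assumption; `federatedAverage`, the update shape, and the numbers are made up for the example, and real systems add secure aggregation, clipping, and many training rounds.

```javascript
// Federated averaging: sample-weighted mean of the weight vectors reported by each node.
function federatedAverage(updates) {
  const totalSamples = updates.reduce((n, u) => n + u.numSamples, 0);
  if (totalSamples === 0) throw new Error("no training samples reported");
  const dim = updates[0].weights.length;
  const globalWeights = new Array(dim).fill(0);
  for (const { weights, numSamples } of updates) {
    if (weights.length !== dim) throw new Error("weight vectors must have the same length");
    const share = numSamples / totalSamples; // this node's influence on the global model
    for (let i = 0; i < dim; i++) globalWeights[i] += weights[i] * share;
  }
  return globalWeights;
}

// Two hypothetical edge nodes; the second trained on three times as much data.
const updates = [
  { weights: [1.0, 2.0], numSamples: 100 },
  { weights: [3.0, 4.0], numSamples: 300 },
];
const globalWeights = federatedAverage(updates);
console.log(globalWeights);
```

Only the small weight vectors and sample counts cross the network here; the training records themselves never leave the node.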

💡 Business Value

A hospital using federated learning across 50 devices improves diagnostic models without a single patient record ever leaving the facility — satisfying HIPAA, GDPR, and institutional privacy policies simultaneously.

Edge Orchestration & GitOps

Managing thousands of edge nodes manually is impossible. Modern platforms use GitOps workflows — infrastructure and application state defined as code in Git repos, automatically synced to edge nodes by agents like FluxCD or ArgoCD running on K3s clusters. Platforms like Azure IoT Edge, AWS Greengrass, and Eclipse ioFog provide this at scale.
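At the heart of a GitOps workflow is a reconcile loop: compare the desired state declared in Git with what is actually running on a node, then apply the difference. The sketch below shows only that comparison step as a conceptual model. `planReconcile`, the workload names, and the image tags are hypothetical, and this is not how FluxCD or ArgoCD are implemented internally.

```javascript
// Compute the actions needed to move a node from its actual state to the desired state.
// Both states are maps of workload name -> container image.
function planReconcile(desired, actual) {
  const actions = [];
  for (const [name, image] of Object.entries(desired)) {
    if (!(name in actual)) {
      actions.push({ op: "deploy", name, image }); // declared but not running
    } else if (actual[name] !== image) {
      actions.push({ op: "update", name, image }); // running the wrong version
    }
  }
  for (const name of Object.keys(actual)) {
    if (!(name in desired)) {
      actions.push({ op: "remove", name }); // running but no longer declared
    }
  }
  return actions;
}

// Desired state as it might be read from a Git repository (illustrative values).
const desired = {
  "vision-inference": "yolov8-edge:1.3",
  "mqtt-broker": "broker:2.0",
};
// State reported by one edge node.
const actual = {
  "vision-inference": "yolov8-edge:1.2",
  "legacy-agent": "agent:0.9",
};

const plan = planReconcile(desired, actual);
console.log(JSON.stringify(plan));
```

Because the plan is derived from state rather than from a list of commands, a node that was offline for a week converges to the same result as one that never disconnected.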

Challenges & Trade-offs

| Challenge | Description | Mitigation |
|---|---|---|
| Security Surface | Thousands of physical nodes are harder to patch and can be physically tampered with | TPM, secure boot, zero-trust networking, OTA signing |
| Heterogeneity | Mixed ARM/x86 hardware, OS variants, and connectivity types | Containerization, hardware abstraction layers |
| Resource Constraints | Limited CPU, RAM, and power at the device tier | Model quantization, workload offloading decisions |
| Observability | Distributed logs, metrics, and traces across remote nodes | Lightweight agents (Prometheus+Grafana), log aggregation |
| Data Consistency | Offline nodes create stale or divergent local state | CRDTs, event sourcing, conflict resolution policies |
| Cost Complexity | Hardware CAPEX + management overhead can exceed cloud savings | ROI modeling; edge-only for high-data/latency-sensitive flows |
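For the data consistency row, a grow-only counter (G-Counter) is one of the simplest CRDTs and shows why they suit offline nodes: each node increments only its own slot, and merging takes the per-node maximum, so replicas converge regardless of merge order or repetition. The `GCounter` class and node names below are assumptions for illustration, not a production library.

```javascript
// Grow-only counter CRDT: one slot per node, merge by taking the per-node maximum.
class GCounter {
  constructor(nodeId) {
    this.nodeId = nodeId;
    this.counts = {}; // nodeId -> number of increments seen from that node
  }

  // A node may only increase its own slot.
  increment(by = 1) {
    this.counts[this.nodeId] = (this.counts[this.nodeId] || 0) + by;
  }

  // The counter value is the sum over all known nodes.
  value() {
    return Object.values(this.counts).reduce((a, b) => a + b, 0);
  }

  // Merging is commutative, associative, and idempotent, so order and repeats do not matter.
  merge(other) {
    for (const [id, n] of Object.entries(other.counts)) {
      this.counts[id] = Math.max(this.counts[id] || 0, n);
    }
  }
}

// Two edge nodes count events independently while disconnected.
const nodeA = new GCounter("edge-a");
const nodeB = new GCounter("edge-b");
nodeA.increment(3);
nodeB.increment(2);

// After reconnecting they exchange state; a repeated merge changes nothing.
nodeA.merge(nodeB);
nodeB.merge(nodeA);
nodeA.merge(nodeB);
console.log(nodeA.value(), nodeB.value());
```

Counters are the easy case; state that can be overwritten or deleted needs richer CRDTs or an explicit conflict resolution policy.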

Key Platforms & Tools

🟢 AWS Greengrass

Run Lambda functions and containers on edge devices. Deep AWS service integration. Best for AWS-native shops.

Azure IoT Edge

Deploy Azure AI/ML modules to the edge. Strong on enterprise OT/IT convergence and hybrid cloud scenarios.

☸️ K3s / KubeEdge

Lightweight Kubernetes for resource-constrained edge nodes. KubeEdge extends K8s APIs to the device tier.

⚙️ Eclipse ioFog / EdgeX Foundry

Open-source frameworks for edge microservice orchestration and IoT device protocol normalization.

NVIDIA Jetson

GPU-accelerated edge AI hardware. Runs full deep learning inference (YOLO, BERT-tiny) at the edge.

Fleet management for edge Linux devices. OTA updates, remote access, container rollout at scale.

📘 Next Steps

From here, the natural progression is edge security hardening (zero-trust, certificate rotation), time-series data pipelines (InfluxDB at edge), and edge-native application design patterns — all covered in upcoming DataBusinessCentral deep dives.

Edge computing has moved well past the experimental phase — it is now a foundational layer of modern data infrastructure. By distributing compute to where data is born, organizations unlock real-time responsiveness, reduce bandwidth overhead, and maintain operations even when cloud connectivity fails.

From understanding the basic three-tier architecture to deploying containerized workloads with K3s, running federated learning across distributed nodes, or pushing AI inference onto low-power hardware with TinyML, the edge computing stack rewards engineers who invest in its fundamentals. The intermediate practitioner knows that the right architecture is never edge-only or cloud-only — it is a deliberate balance, driven by latency requirements, data volume, regulatory constraints, and cost.

As 5G networks mature and edge hardware becomes more capable and affordable, the line between cloud and edge will continue to blur. Businesses that build edge literacy today are positioning themselves to run faster, leaner, and more resilient operations tomorrow. The next steps: harden your edge security posture, design for offline-first data flows, and explore time-series pipelines purpose-built for constrained environments.