KEY TAKEAWAYS

- Data bias limits global scalability: AI models trained exclusively on Western datasets struggle to interpret regional dialects, cultural nuances, and localized visual cues.

- Video data multiplies complexity: Moving from text to video moderation requires multimodal data processing (audio, visual, and behavioral) to accurately assess intent.

- False positives cost businesses: Over-reliance on context-blind keyword filters leads to the censorship of legitimate users, increasing churn and damaging brand trust.

- Localized data governance is the solution: Building ethical AI requires curating diverse datasets and implementing Human-in-the-Loop (HITL) systems to capture regional context.

The volume of user-generated content (UGC) uploaded to digital platforms every second has far outstripped human review capacity. To manage this influx, businesses rely heavily on Artificial Intelligence and Machine Learning models to govern digital spaces, moderate content, and protect brand safety.

However, as these automated systems scale globally, a critical flaw in their foundational data architecture is being exposed. The algorithms are powerful, but they are fundamentally context-blind.

When businesses deploy AI models trained on narrow, culturally homogeneous datasets into diverse global markets, the results are often costly: legitimate users are silenced while genuine threats slip through. To build the next generation of ethical, effective AI, we must shift our focus from algorithmic brute force to data nuance.

The Problem with “Proxy” Training Data

At the heart of machine learning is a simple truth: an AI model is only a reflection of its training data.

Historically, most Large Language Models (LLMs) and computer vision systems have been trained on data scraped predominantly from North American and European web content. This data encodes specific cultural norms, slang, visual aesthetics, and behavioral baselines.

When a global platform deploys these standard models to moderate content from users in Africa, Southeast Asia, or Latin America, the AI uses its Western training data as a “proxy” for normal behavior. Because the system lacks the localized data required to understand regional dialects, code-switching, or cultural gestures, it falls back on surface-level signals and behaves, in effect, like a rigid keyword filter.

This creates a dual crisis in digital Trust & Safety:

- The False Positive Epidemic: Harmless colloquialisms, local slang, or educational content are mislabeled as aggressive or policy-violating, silencing legitimate users.

- The Blind Spot: Truly malicious actors bypass the system by using regional terminology or visual cues that the AI’s training data simply does not recognize.
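To make the dual crisis concrete, here is a minimal Python sketch of a context-blind keyword filter. The blocklist and example sentences are hypothetical, chosen only for illustration; the point is that token matching alone produces both failure modes at once.

```python
import re

# Hypothetical blocklist, for illustration only.
BLOCKLIST = {"kill", "shoot", "attack"}


def keyword_flag(text: str) -> bool:
    """Return True if any token appears in BLOCKLIST, ignoring all context."""
    tokens = re.findall(r"[a-z']+", text.lower())
    return any(token in BLOCKLIST for token in tokens)


# False positive: an encouraging idiom trips the filter.
print(keyword_flag("You'll kill it at the audition tomorrow!"))  # True
# Blind spot: an indirect threat with no listed keyword passes.
print(keyword_flag("We will finish him tonight."))  # False
```

Adding more keywords does not fix either case: it widens the false positives while coded or indirect phrasing continues to slip past.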

The Complexity of Multimodal Video Data

This context gap is significantly amplified when analyzing video. Unlike static text, video is a rich, dense stream of multimodal data.

To accurately determine if a video violates a platform’s safety guidelines, an AI must analyze the interplay between what is being said, what is being shown, and how it is being presented. For example, a video showing a physical altercation might be flagged by a basic computer vision model as “violent.” However, if the audio data reveals the context is a sanctioned martial arts competition or a news report, the intent is entirely different.

Processing this level of nuance requires highly sophisticated data engineering. It requires models that do not just identify objects, but synthesize relationships between audio signals, visual framing, and cultural norms.
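The martial-arts example can be expressed as a simple late-fusion rule. This is a minimal sketch under stated assumptions: the visual score and audio context label are assumed to come from separate upstream models (not shown), and the label names and 0.7 threshold are hypothetical. Production systems would typically learn how to combine modalities rather than hard-code it.

```python
from dataclasses import dataclass

# Hypothetical context labels that an audio/speech model might emit.
MITIGATING_CONTEXTS = {"sanctioned_sport", "news_report"}


@dataclass
class VideoSignals:
    visual_violence: float  # 0.0-1.0 score from a hypothetical vision model
    audio_context: str      # label from a hypothetical audio classifier


def assess_video(signals: VideoSignals, threshold: float = 0.7) -> str:
    """Late fusion: the visual score alone never decides a violent-looking clip."""
    if signals.visual_violence < threshold:
        return "allow"
    if signals.audio_context in MITIGATING_CONTEXTS:
        # Same pixels, different intent: keep it up but attach context.
        return "allow_with_context_label"
    return "flag_for_review"


print(assess_video(VideoSignals(0.92, "sanctioned_sport")))  # allow_with_context_label
print(assess_video(VideoSignals(0.92, "unknown")))           # flag_for_review
```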

The Business Case for Ethical Data Strategy

For digital platforms, marketplaces, and social networks, content moderation is not just a regulatory hurdle; it is a core component of user retention and revenue.

When an AI system frequently makes context-blind errors, it erodes the user experience. Creators whose content is unfairly restricted will migrate to competing platforms, taking their audiences (and associated revenue) with them. Conversely, platforms that fail to catch nuanced, localized threats face severe reputational damage and potential regulatory fines.

Investing in context-aware data architecture is no longer a theoretical exercise in AI ethics; it is a critical business strategy for global scalability.

Moving Toward Context-Aware AI Architecture

How can data leaders and engineers build systems that respect nuance? The answer lies in localized data governance and continuous learning models.

1. Curating Representative Datasets

Businesses must actively invest in gathering and annotating training data that reflects the specific regions they serve. This means moving beyond generic open-source datasets and partnering with local data providers to capture regional languages, pidgins, and cultural visual markers.
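A practical first step is auditing how well an annotated dataset covers the markets a platform serves. The sketch below assumes each sample carries `region` and `language` metadata; the function name, the toy counts, and the minimum-count threshold are all hypothetical.

```python
from collections import Counter


def coverage_gaps(samples, required, min_count):
    """Return (region, language) pairs with fewer than min_count labeled samples."""
    counts = Counter((s["region"], s["language"]) for s in samples)
    # Counter returns 0 for pairs that never appear, so missing markets show up too.
    return {pair: counts[pair] for pair in required if counts[pair] < min_count}


# Toy dataset skewed toward one market, for illustration only.
samples = (
    [{"region": "US", "language": "English"}] * 500
    + [{"region": "NG", "language": "Nigerian Pidgin"}] * 40
    + [{"region": "ZW", "language": "Shona"}] * 5
)
required = [("US", "English"), ("NG", "Nigerian Pidgin"), ("ZW", "Shona")]
print(coverage_gaps(samples, required, min_count=100))
# {('NG', 'Nigerian Pidgin'): 40, ('ZW', 'Shona'): 5}
```

The output of an audit like this indicates where partnering with local data providers would have the most impact.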

2. Implementing Human-in-the-Loop (HITL) Pipelines

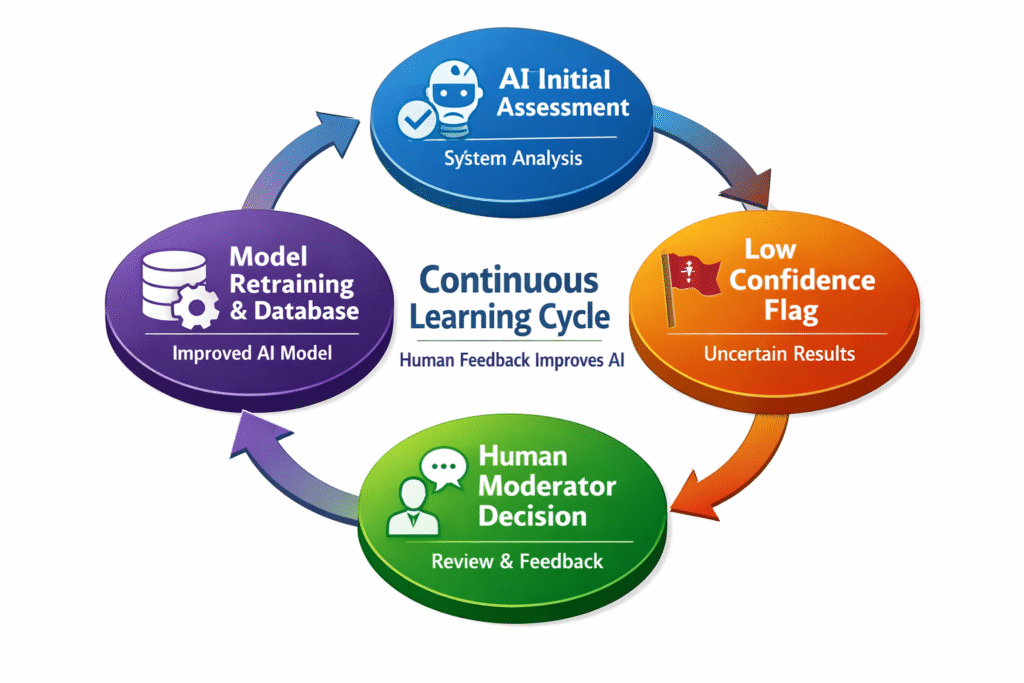

AI should not operate in a vacuum. The most resilient data pipelines use Human-in-the-Loop methodologies. When an AI encounters an edge case it cannot confidently resolve from its training data, the content is routed to a human moderator familiar with the local context.

The human moderator’s decision is then fed back into the system, continuously enriching the model’s understanding of local context.
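The routing and feedback loop can be sketched in a few lines. Assumptions: the model emits a label with a confidence score, items are queued per locale so that moderators with regional knowledge review them, and the 0.85 threshold and class and method names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class HitlPipeline:
    """Hypothetical confidence-based router with a human feedback loop."""

    confidence_threshold: float = 0.85  # hypothetical cut-off
    review_queues: dict[str, list[str]] = field(default_factory=dict)
    training_feedback: list[tuple[str, str, str]] = field(default_factory=list)

    def route(self, item_id: str, locale: str, label: str, confidence: float) -> str:
        """Auto-apply confident predictions; queue edge cases for local review."""
        if confidence >= self.confidence_threshold:
            return label
        self.review_queues.setdefault(locale, []).append(item_id)
        return "pending_human_review"

    def record_decision(self, item_id: str, locale: str, human_label: str) -> None:
        """Store the moderator's verdict as a new labeled example for retraining."""
        self.review_queues[locale].remove(item_id)
        self.training_feedback.append((item_id, locale, human_label))


pipeline = HitlPipeline()
print(pipeline.route("vid-001", "ZW", "allow", 0.97))      # allow
print(pipeline.route("vid-002", "ZW", "violation", 0.55))  # pending_human_review
pipeline.record_decision("vid-002", "ZW", "allow")
print(pipeline.training_feedback)  # [('vid-002', 'ZW', 'allow')]
```

The `training_feedback` list is where the loop closes: periodically retraining on these human-labeled edge cases is what gradually enriches the model's local context.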

3. Shifting from Keywords to Intent Modeling

Data strategies must evolve from simple keyword matching to intent modeling. By training models on the semantic relationships between words and visual cues within a specific cultural framework, AI can learn to differentiate between a harmless local joke and a genuine threat.
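As a toy illustration of why context matters, the sketch below trains a tiny multinomial Naive Bayes classifier on word bigrams rather than single keywords. Everything here is hypothetical: the four training sentences stand in for examples labeled by local annotators, and bigrams are only a crude proxy for the semantic and visual relationships a production intent model would learn. It is not presented as VidSentry's method.

```python
import math
from collections import Counter, defaultdict

# Hypothetical labeled examples standing in for locally annotated data.
TRAINING = [
    ("you will kill it", "harmless"),
    ("she will kill it", "harmless"),
    ("i will kill you", "threat"),
    ("we will find you", "threat"),
]


def bigrams(text: str) -> list[str]:
    """Turn text into adjacent word pairs so each feature carries some context."""
    tokens = text.lower().split()
    return [f"{a} {b}" for a, b in zip(tokens, tokens[1:])]


class BigramIntentModel:
    """Toy multinomial Naive Bayes over word bigrams, with Laplace smoothing."""

    def fit(self, examples):
        self.class_counts = Counter(label for _, label in examples)
        self.feature_counts = defaultdict(Counter)
        for text, label in examples:
            self.feature_counts[label].update(bigrams(text))
        self.vocab = {f for counts in self.feature_counts.values() for f in counts}
        return self

    def predict(self, text: str) -> str:
        total_docs = sum(self.class_counts.values())
        best_label, best_score = "", -math.inf
        for label, n_docs in self.class_counts.items():
            counts = self.feature_counts[label]
            denom = sum(counts.values()) + len(self.vocab)
            # Log prior plus the smoothed log likelihood of each bigram.
            score = math.log(n_docs / total_docs)
            for feature in bigrams(text):
                score += math.log((counts[feature] + 1) / denom)
            if score > best_score:
                best_label, best_score = label, score
        return best_label


model = BigramIntentModel().fit(TRAINING)
print(model.predict("they will kill it"))  # harmless
print(model.predict("he will kill you"))   # threat
```

A keyword filter matching "kill" would flag both sentences; the bigram model separates them because "kill it" and "kill you" were labeled differently in its training data.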

Conclusion

The future of data-driven business relies on trust. As AI assumes a larger role in governing our digital interactions, we must ensure these systems are equipped to understand the full spectrum of human communication. By prioritizing culturally nuanced data and investing in context-aware architectures, we can build a safer, more inclusive digital ecosystem that scales across borders without sacrificing local identity.

ABOUT THE AUTHOR

Pride Chamisa is the Founder of VidSentry, an AI-powered video moderation platform dedicated to building safer digital spaces through context-aware machine learning. Specializing in AI safety, data nuance, and the intersection of global technology and African contexts, Pride builds infrastructure that helps platforms scale Trust & Safety without sacrificing cultural accuracy.

External Links:

- Connect on LinkedIn: https://www.linkedin.com/in/chamisapride

- Learn more about context-aware AI: https://vidsentry.com