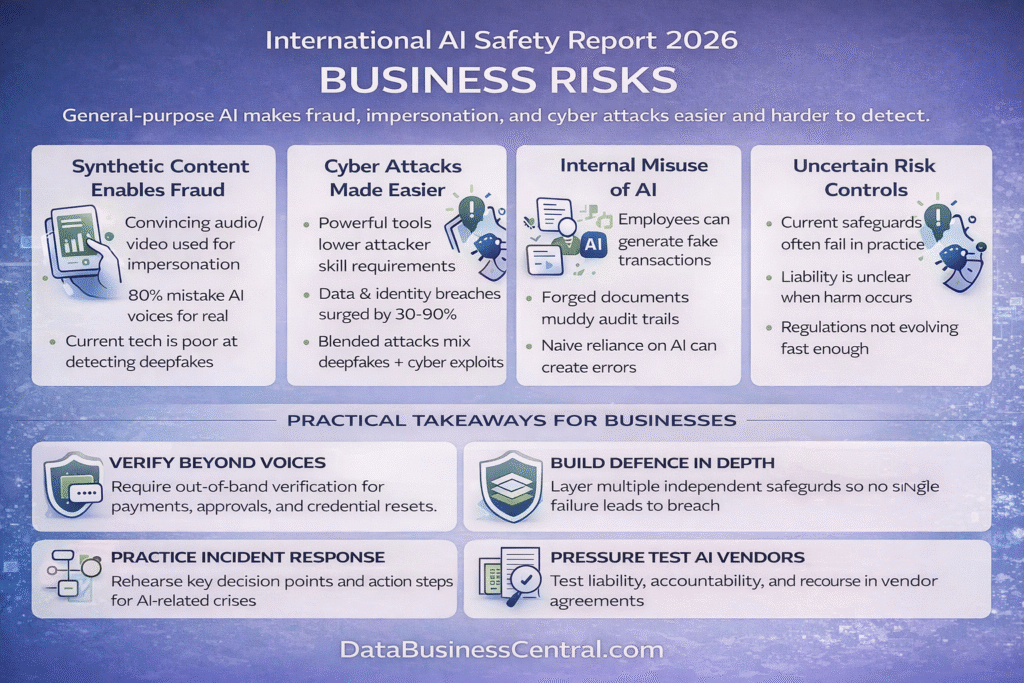

General-purpose AI is making fraud, impersonation, and cyber compromise cheaper, faster, and harder to attribute — and current safeguards do not reliably prevent harm.

The International AI Safety Report 2026 is framed as a scientific assessment to support policymaking. Produced by over 100 independent experts chaired by Turing Award winner Yoshua Bengio and backed by more than 30 countries, the EU, the OECD, and the UN, it is organised around three questions: what general-purpose AI can do today, what emerging risks it poses, and what risk management approaches exist — and how effective they are.

For business leaders, the immediate issue is misuse: AI-assisted content and operations that erode trust-based controls. The report notes the scale and unevenness of adoption — around 700 million weekly users of leading AI systems, with some countries above 50% usage, while parts of Africa, Asia, and Latin America likely remain below 10% — meaning risk maturity will vary across markets and third-party ecosystems.

Read through a business crime lens, the report points to three practical problems: it is easier than ever to defraud a company; it is harder than ever to check whether something is real; and it is unclear who can be held accountable when harm occurs. Governance initiatives are proliferating, including EU work on general-purpose AI, the G7 Hiroshima AI Process reporting framework, and safety frameworks published by major developers, but evidence on the real-world effectiveness of most risk management measures remains limited.

Analysis

1. Synthetic Content Is a Direct Threat to Fraud Controls

The report highlights harmful incidents involving AI-generated content, particularly audio and video impersonation. Research cited in the report suggests listeners mistake AI-generated voices for real speakers 80% of the time, and it describes cases where cloned voices were used to persuade victims to transfer money by exploiting trust-based approval processes.

This is a real and immediate threat to businesses: a credible synthetic “executive” request to move funds, change supplier bank details, override approval steps, reset credentials, or share sensitive information. The risk is amplified by three features — low cost and low skill requirements, difficulty verifying authenticity under time pressure, and imperfect detection. The report notes the limits of technical fixes: watermarks and labels can help, but skilled actors can often remove them, and identifying where deepfakes originate remains technically difficult.

For boards and senior leadership, the practical implication is direct. Voice, video, and email can no longer be trusted for high-risk actions. Every organisation should assume that a credible-looking communication requesting urgent financial action may be synthetic. The trust model that relies on recognising a voice or a face is broken.

2. Cyber Operations Are Being Commoditised — Even Without Full Autonomy

The report devotes substantial attention to AI use in cyberattacks, noting that developers increasingly report misuse of their systems in cyber operations and that illicit marketplaces sell easy-to-use tools that lower attacker skill requirements. Data exfiltration volumes for major ransomware families surged nearly 93% in the first half of 2025, and identity-based attacks rose 32% in the same period.

The report is careful about overclaiming. Fully autonomous end-to-end cyberattacks have not been reported, and it is difficult to confirm whether real-world incident levels have increased because of AI. The more practical point is that blended attacks are becoming easier: synthetic content to gain initial access or authorisation, paired with AI-assisted exploitation and persistence.

The report offers a measured note of optimism: whether future AI capability improvements will benefit attackers or defenders more remains an open question. But any defensive advantage will only materialise where organisations actively deploy AI in security and fraud detection, not merely in operations.

3. Malfunction, Misstatement, and Automation Bias Create Exposure

The business risk here is not only external victimisation. General-purpose AI can also enable fraud from within — employees, agents, and other associated persons generating credible fakes and muddying audit trails.

In the UK, this intersects directly with the “failure to prevent fraud” offence in force from September 2025 for large organisations, which focuses on whether an organisation had reasonable prevention procedures when an associated person commits specified fraud offences. AI-enabled methods — including fake approval requests, fabricated supporting documents, and high-volume communications — should now be explicitly considered in any fraud risk assessment.

The report’s discussion of reliability challenges and “automation bias” adds a separate exposure route. Where teams defer to AI-assisted outputs even when those outputs are wrong, organisations risk making decisions and statements that are harder to evidence and defend — including in regulatory disclosures, contractual representations, and dealings with counterparties.

4. Risk Management Is Improving, but Remediation Remains Uncertain

Over half of the report is dedicated to risk management practices. It observes that most risk management initiatives remain voluntary, but a few jurisdictions are beginning to formalise some practices as legal requirements.

The report’s assessment is cautious: current measures do not reliably prevent harm, and evidence of effectiveness in real-world conditions remains limited. It also highlights uncertainty over liability allocation, because harms can be difficult to trace to specific design choices and responsibilities are distributed across multiple actors in the AI supply chain.

The report stresses “defence-in-depth” — multiple independent layers of safeguards so that if one fails, others may still prevent harm — and “building societal resilience,” defined as the ability of systems to resist, absorb, recover from, and adapt to shocks. For businesses, the lesson is clear: rehearse AI incident response in practical detail — who leads, who has authority to pause or shut down affected systems, and how you will communicate externally when an incident occurs.

Practical Takeaways

Treat voice, video, and email as untrusted for high-risk actions. Payments, supplier changes, credential resets, and urgent approvals must require out-of-band verification through a separate, pre-established channel.
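For teams that encode this rule in a payments or helpdesk workflow, the key design point is that the verification channel must never be taken from the request itself. The following is a minimal sketch under stated assumptions, not a reference implementation: the `KNOWN_CONTACTS` directory, the `callback_number` function, and the sample identifier and phone numbers are all hypothetical and do not come from the report.

```python
from typing import Optional

# Hypothetical directory of contact numbers recorded at onboarding,
# maintained separately from any inbound request.
KNOWN_CONTACTS = {"supplier-001": "+44 20 7946 0123"}


def callback_number(counterparty_id: str,
                    number_in_request: Optional[str] = None) -> str:
    """Return the number to call back on.

    The number supplied in the request is deliberately ignored: a
    synthetic request can carry attacker-controlled contact details.
    """
    try:
        return KNOWN_CONTACTS[counterparty_id]
    except KeyError:
        # No pre-established channel exists, so the request cannot be
        # verified out of band and should be escalated, not actioned.
        raise LookupError(
            f"no pre-established contact for {counterparty_id!r}"
        ) from None
```

In this sketch a request from an unknown counterparty fails closed: the absence of a pre-established channel blocks the action rather than falling back to the details in the message.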

Build defence in depth with independent, layered safeguards. Use dual approvals, call-backs via known numbers, friction for first-time or changed payees, and time delays for high-value transactions, with each layer operating independently so that the failure of one does not disable the others.
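The layering described above can be illustrated with a short sketch. It is an illustration under assumptions rather than a recommended control set: the `PaymentRequest` fields, the `HIGH_VALUE` and `HOLD_PERIOD` thresholds, and the function names are hypothetical, and real values are a policy decision. The point it shows is that every layer is evaluated on its own inputs, and a payment is released only if none of them fails.

```python
from dataclasses import dataclass
from datetime import timedelta


@dataclass
class PaymentRequest:
    amount: float
    payee_is_new_or_changed: bool
    approver_ids: tuple          # IDs of the people who approved
    callback_confirmed: bool     # confirmed via a number already on file
    payee_details_verified: bool # bank details checked independently
    age: timedelta               # time since the request was raised


# Hypothetical thresholds, chosen only for illustration.
HIGH_VALUE = 10_000
HOLD_PERIOD = timedelta(hours=24)


def dual_approval(req):
    # Two *distinct* approvers; the same person twice does not count.
    return len(set(req.approver_ids)) >= 2


def out_of_band_callback(req):
    return req.callback_confirmed


def payee_friction(req):
    # Extra verification applies only to first-time or changed payees.
    return (not req.payee_is_new_or_changed) or req.payee_details_verified


def time_delay(req):
    # High-value requests must wait out the hold period.
    return req.amount < HIGH_VALUE or req.age >= HOLD_PERIOD


LAYERS = {
    "dual_approval": dual_approval,
    "out_of_band_callback": out_of_band_callback,
    "payee_friction": payee_friction,
    "time_delay": time_delay,
}


def failed_layers(req):
    # Every layer is evaluated; none is skipped because another passed.
    return [name for name, check in LAYERS.items() if not check(req)]


def release_allowed(req):
    return not failed_layers(req)
```

Returning the list of failed layers, rather than a single yes or no, also leaves a record of which controls were tested for each payment, which supports the evidencing of controls discussed elsewhere in this article.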

Run AI incident scenarios. Rehearse contested authenticity situations, rapid shut-down decisions, and evidence preservation before a real incident forces your hand.

Update fraud risk assessments to cover AI-enabled methods. Synthetic media, document fabrication, and insider misuse should now be standard items in any “reasonable procedures” programme, particularly under failure-to-prevent obligations.

Pressure-test accountability and recourse with AI vendors. Assume that remediation in the event of harm may be slow, partial, or impossible. Contract accordingly.

Conclusion

The International AI Safety Report 2026 is aimed at policymakers, but its message to business leaders is immediate: general-purpose AI means the barrier to entry for fraud and deception is lower than it has ever been. Synthetic content is breaking the trust-based controls that organisations have relied upon for decades. Cyber operations are being commoditised. Insider misuse is becoming harder to detect. And current safeguards do not reliably prevent harm.

At the same time, regulatory expectations are rising. Governance frameworks must be documented, risk assessments must address AI-specific threats, and controls must be tested and evidenced. The organisations best positioned for this environment are those that treat AI governance not as a standalone exercise but as a compliance obligation embedded in every framework they already operate under.

The sensible response is resilience: build defence in depth, treat AI-assisted fraud as part of your prevention programme, and rehearse how your business will respond to a critical incident before one forces the question.