AI-generated media has matured into a board-level threat. Here is how forward-thinking organizations are building the human, procedural, and technical defenses needed to stay ahead of it.

Why Deepfake Fraud Is Escalating Now

There is a specific reason 2024 and 2025 represent an inflection point in enterprise fraud risk — and it is not simply that AI has become more capable. It is that the cost of sophisticated deception has collapsed to nearly zero.

Until recently, creating a convincing audio or video clone of a real person required either significant technical expertise or expensive production infrastructure. Today, open-source generative adversarial network (GAN) models are freely available, voice cloning tools require only seconds of source audio, and the raw material — executive speeches, earnings calls, conference keynotes, media interviews — is archived publicly across the internet. Attackers now have access to industrial-grade deception at consumer-grade prices.

Critical Context

Gartner predicts that by 2026, 30% of enterprises will no longer consider identity verification solutions to be reliable in isolation. This signals an industry-wide shift: the technology that companies trust for authentication is being systematically undermined by the same AI ecosystem that built it.

The result is a threat that exploits the very foundation of enterprise communication — the human presumption of authenticity when we hear or see a familiar face or voice. Social engineering attacks have always preyed on human psychology: urgency, authority, and trust. Deepfakes do not introduce a new psychological vulnerability; they dramatically amplify the potency of existing ones.

For enterprises specifically, this matters because the highest-value targets — finance teams authorizing wire transfers, HR staff managing access credentials, IT administrators with system privileges — are precisely the people who operate under authority-laden communication pressure daily. They are trained to act quickly when a senior leader calls. That trained responsiveness is the attack surface.

The Six Attack Vectors Targeting Enterprises

Deepfake fraud is not a single attack type. It is a tactic layer that augments existing fraud vectors — business email compromise, identity fraud, and social engineering — with synthetic media that erases traditional verification cues. Understanding the specific vectors is the prerequisite for building defenses.

1. Executive Voice Cloning (BEC 2.0)

Attackers harvest audio from publicly available sources — earnings calls, investor briefings, conference talks, podcast appearances — and use voice synthesis tools to clone the executive’s vocal signature. They then call finance or operations staff with a fabricated urgent request: a confidential wire transfer, an emergency vendor payment, a rapid credential handover. The combination of a familiar voice and manufactured urgency bypasses standard skepticism with alarming reliability. This represents the evolution of Business Email Compromise into a channel that feels far more personal and trustworthy than email ever did.

2. Synthetic Video Meeting Attacks

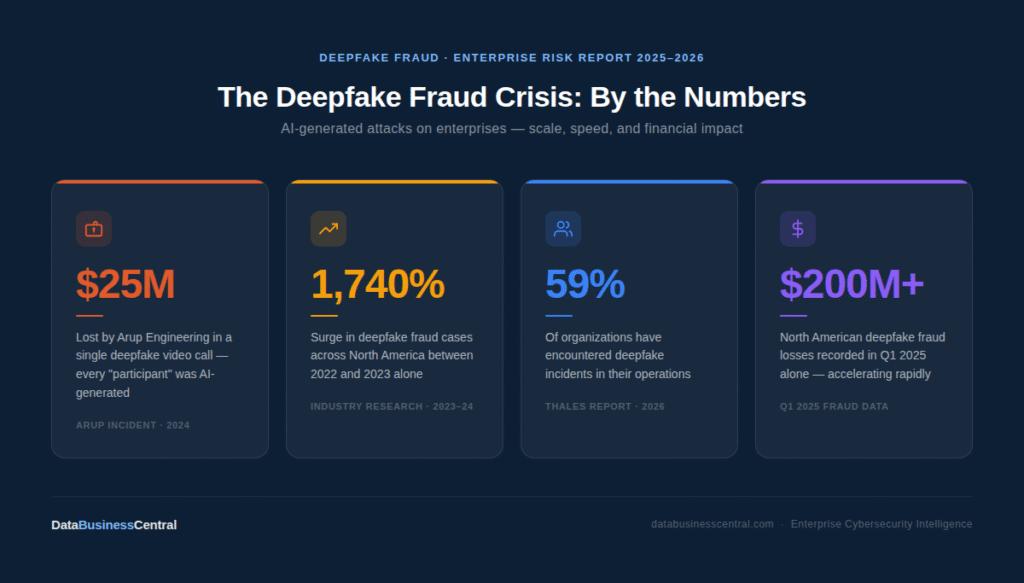

Deepfake video filters can be applied in real time to video conferencing sessions: the attacker appears as a known executive during a live Teams or Zoom call, complete with plausible facial movement and synchronized voice. This vector is particularly dangerous because employees have been conditioned to treat video as more trustworthy than text, and because organizations have not yet adopted the equivalent of an email spam filter for synthetic video. The 2024 Arup incident, a $25 million loss following a video call in which every participant other than the targeted employee was AI-generated, remains the most striking illustration of this vector’s destructive potential.

3. KYC and Identity Verification Bypass

Deepfake face-swap technology is being used to defeat biometric identity verification systems at scale. Synthetic faces tied to fabricated document identities allow fraudsters to pass KYC checks at financial institutions, open corporate accounts, and infiltrate vendor onboarding systems. The cryptocurrency and fintech sectors have borne the brunt of this vector, accounting for an estimated 88% of all detected deepfake fraud cases in recent years, with the broader fintech industry reporting a 700% increase in such incidents.

4. Investor and Market Manipulation

Fabricated audio or video of a CFO or CEO announcing false earnings data, a pending acquisition, or a significant strategic pivot can be strategically leaked to manipulate stock prices or destabilize partners and suppliers. The reputational and legal exposure from this vector extends well beyond the immediate financial loss — it strikes at the credibility of the C-suite itself and can trigger regulatory investigations even when the organization is the victim.

5. Cross-Channel Spear-Phishing Amplification

The most sophisticated attacks do not rely on a single deepfake in isolation. Instead, they build a cross-channel narrative: a fraudulent email establishes the pretext, a deepfake voice call “confirms” it, and a fabricated document completes the authorization chain. Each layer reinforces the others, dramatically reducing the probability that any single skeptical employee will break the chain. Cross-channel attack construction is now a documented tactic in enterprise fraud cases.

6. Brand Impersonation and Malicious Advertising

AI-generated videos of company executives endorsing fraudulent products, cryptocurrency scams, or phishing sites are run as paid advertisements on social media platforms, targeting the brand’s own customer base. This vector simultaneously defrauds customers and damages the brand’s reputation in ways that are extraordinarily difficult to contain once the content is in distribution.

Real-World Incidents: What Actually Happened

Arup Engineering, Hong Kong (February 2024)

Loss: $25 Million USD

A finance employee at the global engineering firm was invited to a video conference call. Every other participant — including the individual presenting as the company’s Chief Financial Officer — was an AI-generated deepfake constructed from publicly available footage. The call appeared entirely legitimate. The employee authorized a series of wire transfers totaling $25 million before the fraud was discovered. This incident redefined what enterprises must now treat as a credible threat scenario in their fraud controls.

Wiz CEO Voice Clone (2024)

Outcome: Credentials Targeted, Attack Repelled

Attackers sourced audio from a public conference appearance by Wiz CEO Assaf Rappaport and used it to clone his voice for a targeted spear-phishing call against employees. The goal was credential theft. The attack failed because staff noticed the communication did not sound like his usual manner and tone — a textbook example of how employee awareness training, not just technology, intercepted a sophisticated attack at the final moment.

Ferrari Executive Impersonation (July 2024)

Outcome: Suspicious Behavior Flagged by Target

A Ferrari executive received WhatsApp messages followed by a phone call from someone impersonating CEO Benedetto Vigna using an AI-generated voice and photo. The impostor discussed a supposed confidential acquisition requiring immediate action. The executive grew suspicious because of subtle mechanical tones in the voice and contextual inconsistencies — demonstrating that human-layer detection, even at the executive level, requires deliberate cultivation and clear protocols for what to do with suspicion once it arises.

Common Thread

In every successful deepfake attack, the breach occurred at the intersection of technical sophistication and procedural absence. The technology worked. What failed was the human process designed to catch what technology cannot. In every prevented attack, either employee awareness or an out-of-band verification step caught what automated systems missed.

A Layered Detection Architecture

No single detection technology reliably catches all deepfakes — a fact that cannot be overstated. Current AI classifiers lose up to 50% of their accuracy when tested against real-world deepfake videos (versus controlled lab environments). The strategic implication is clear: detection must be layered, not reliant on any single tool or method.

| Layer | What It Detects | Reliability | Key Limitation |
|---|---|---|---|
| AI Media Analysis (GAN detection, liveness checks, facial artifact scanning) | Facial inconsistencies, lip-sync mismatches, skin texture anomalies, blood flow patterns | 90–97% (lab) | Accuracy drops sharply on real-world footage; bad actors actively tune against known detectors |
| Behavioral Biometrics (speech cadence, typing patterns, device fingerprinting) | Deviations from established behavioral baselines for known individuals | High (adaptive) | Requires baseline data; new employees or unknown contacts provide no reference point |
| Metadata & Provenance (file signatures, compression artifacts, C2PA standards) | Manipulation history, device of origin, edit timestamps, GAN fingerprints | Moderate–High | Sophisticated attackers can strip metadata; provenance standards adoption remains low |
| Real-Time Call Monitoring (VoIP analysis, video conferencing integration) | Synthetic audio patterns during live calls; adversary-in-the-middle attacks | Emerging | Latency constraints in live environments; integration with conferencing platforms varies widely |
| Dark Web Intelligence (underground marketplace monitoring, brand impersonation alerts) | Executive audio/video being traded or prepared for use; emerging attack campaigns | High (early warning) | Upstream intelligence only; does not stop an attack already in motion |
| Human Recognition (employee training, awareness, contextual skepticism) | Behavioral anomalies, contextual inconsistencies, unusual urgency, off-script responses | Variable (training-dependent) | Fatigues under sustained pressure; inconsistent without ongoing reinforcement |

The practical implication of this architecture is that enterprises must invest across multiple layers simultaneously, with a particular emphasis on the procedural layer — because it is the one layer that functions even when every technology-based layer fails.
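As a rough illustration of how the layers might combine, the sketch below treats every automated layer as advisory and keeps the procedural check mandatory for high-risk actions even when nothing is flagged. The `LayerSignal` type, the `assess` function, and the three outcome strings are hypothetical names chosen for this example; they do not come from any detection product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LayerSignal:
    """Result reported by one detection layer (names are illustrative)."""
    layer: str
    flagged: bool  # True if this layer raised a concern


def assess(signals: list[LayerSignal], high_risk_action: bool) -> str:
    """Combine layer results into 'hold', 'verify', or 'proceed'.

    Assumption: a flag from any single layer is enough to hold the request,
    and a clean result from every layer never waives procedural verification
    for a high-risk action.
    """
    if any(signal.flagged for signal in signals):
        return "hold"
    if high_risk_action:
        return "verify"
    return "proceed"


all_clear = [
    LayerSignal("ai_media_analysis", flagged=False),
    LayerSignal("behavioral_biometrics", flagged=False),
    LayerSignal("metadata_provenance", flagged=False),
]
print(assess(all_clear, high_risk_action=True))  # verify
```

The design choice worth noting is the second branch: a fully clean technical verdict still routes a high-risk action to human verification, which is what keeps the procedural layer working when the others are defeated.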

The Prevention Framework: Six Strategic Pillars

Effective deepfake prevention is not a product you can purchase. It is an organizational capability you have to build — spanning technology configuration, workflow redesign, cultural change, and governance. Below is the six-pillar framework DataBusinessCentral recommends as a foundation.

Pillar 1

Harden Authentication Infrastructure

- Deploy phishing-resistant MFA (FIDO2/passkeys) across all channels

- Implement Zero Trust with continuous session verification

- Replace biometrics-only verification with multi-modal checks

- Add environmental and cross-device validation for remote onboarding

- Integrate real-time deepfake detection into video conferencing

Pillar 2

Rebuild Verification Workflows

- Mandate out-of-band confirmation for wire transfers and credential changes

- Establish pre-agreed code word systems for executive-to-employee contact

- Enforce multi-person authorization on all high-value transactions

- Create explicit “pause and verify” protocols triggered by urgency cues

- Treat video and audio as unverified by default — same as email
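For teams that record code words in an internal tool rather than on paper, one possible approach is to store only a salted hash, so that a compromised system does not reveal the phrase. The sketch below is a minimal illustration using Python's standard library; the function names, the normalization rule, and the iteration count are assumptions made for this example.

```python
import hashlib
import hmac
import os

ITERATIONS = 100_000  # illustrative work factor, not a recommendation


def _normalize(code_word: str) -> bytes:
    # Ignore case and extra whitespace so spoken phrases compare consistently.
    return " ".join(code_word.casefold().split()).encode("utf-8")


def enroll_code_word(code_word: str) -> tuple[bytes, bytes]:
    """Return (salt, digest) to store instead of the code word itself."""
    salt = os.urandom(16)
    digest = hashlib.pbkdf2_hmac("sha256", _normalize(code_word), salt, ITERATIONS)
    return salt, digest


def verify_code_word(candidate: str, salt: bytes, digest: bytes) -> bool:
    """Check a volunteered phrase against the stored digest."""
    attempt = hashlib.pbkdf2_hmac("sha256", _normalize(candidate), salt, ITERATIONS)
    # compare_digest avoids leaking how many leading bytes matched.
    return hmac.compare_digest(attempt, digest)


salt, digest = enroll_code_word("Blue Heron")
print(verify_code_word("blue heron", salt, digest))  # True
```

A code word only adds protection if it is shared through a channel the attacker cannot observe and rotated after any suspected exposure.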

Pillar 3

Build a Skeptical Culture

- Run deepfake-specific simulation exercises, not generic phishing drills

- Train finance, HR, and IT teams to recognize synthetic media artifacts

- Reward staff who flag suspicious communications — normalize the pause

- Conduct executive briefings on personal exposure from public audio/video

- Make “I need to verify this separately” a culturally supported response

Pillar 4

Reduce Executive Digital Footprint

- Audit and minimize publicly archived executive audio and video

- Apply C2PA content authentication to all official communications

- Monitor for unauthorized executive likeness use online

- Watermark official media at source for future provenance verification

- Develop communications policies that limit unguarded long-form exposure

Pillar 5

Institutionalize Governance

- Elevate deepfake fraud to board-level risk (not solely an IT matter)

- Establish a dedicated deepfake incident response policy and owner

- Monitor EU AI Act and US regulatory developments for compliance obligations

- Conduct annual third-party deepfake penetration testing

- Document your detection and response posture for insurer and regulator review

Pillar 6

Extend Controls to the Supply Chain

- Require comparable deepfake verification standards from vendors and partners

- Include deepfake protocol clauses in supplier communication agreements

- Vet detection vendors on real-world accuracy, not only benchmark scores

- Avoid sole reliance on any single identity verification solution

- Establish shared incident intelligence-sharing with industry peers

DBC’s Unique Strategic Lens: Procedural Resilience Over Technology Dependence

Most enterprise security conversations about deepfakes default quickly to tool discussions: which detection platform, which AI classifier, which identity verification vendor. DataBusinessCentral takes a deliberately different view, grounded in what the data actually shows about how attacks succeed and fail.

“Any security strategy that relies solely on buying the latest detection tool is flawed and destined to fail. The strategic focus must shift from pure technology to procedural resilience — building verification processes that can withstand deception even if a deepfake is technically perfect.” — DeepStrike Research, Deepfake Statistics Report 2025

Our unique strategic recommendation is this: design your fraud controls as if your deepfake detection tools will fail — because eventually, against a sufficiently sophisticated and targeted attacker, they will. The defenses that have stopped real attacks are not the AI classifiers. They are the employees who paused because something felt slightly wrong. They are the code word systems that the attacker did not know. They are the dual-authorization workflows that required a second human to independently confirm.

The “Technically Perfect Deepfake” Scenario

Imagine a scenario where an attacker has constructed a deepfake that defeats every automated detection layer you have deployed — the GAN signatures are scrubbed, the liveness check passes, the voice biometrics are indistinguishable from the genuine article. Your technology stack says: authentic. In this scenario, which is not hypothetical but increasingly probable, your only remaining defense is procedural.

This means designing workflows where no single communication — regardless of its apparent origin or medium — can authorize a high-risk action without independent corroboration. It means treating the medium itself as inherently untrustworthy and placing the verification burden on the action, not the communicator.

The “Verify the Action, Not the Person” Principle

Traditional security thinking focuses on verifying identity: who is making this request? The deepfake problem requires a complementary principle: verify the action regardless of who appears to be requesting it. A wire transfer above a defined threshold should require multi-person authorization and an out-of-band confirmation step — not because we distrust the executive, but because the executive’s identity can now be forged with frightening fidelity. The control is on the action, not the actor.
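The principle can be sketched as a policy check that never inspects how convincing the requester seemed. In the example below, the `TransferRequest` fields, the 10,000 USD threshold, and the two-approver minimum are hypothetical values chosen for illustration; each organization would set its own.

```python
from dataclasses import dataclass, field

THRESHOLD_USD = 10_000  # hypothetical threshold
MIN_INDEPENDENT_APPROVERS = 2  # hypothetical minimum


@dataclass
class TransferRequest:
    amount_usd: float
    requested_by: str
    approvers: set[str] = field(default_factory=set)
    out_of_band_confirmed: bool = False


def may_execute(request: TransferRequest) -> bool:
    """Decide from the action's properties only, not the requester's apparent identity."""
    if request.amount_usd <= THRESHOLD_USD:
        return True
    # The requester cannot count as one of their own approvers.
    independent = request.approvers - {request.requested_by}
    return (
        len(independent) >= MIN_INDEPENDENT_APPROVERS
        and request.out_of_band_confirmed
    )


request = TransferRequest(250_000, "ceo", approvers={"ceo", "controller"})
print(may_execute(request))  # False
```

Note that there is no "trusted sender" branch: a request that appears to come from the CEO passes through exactly the same gate as any other.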

DBC Strategic Insight

Organizations that have successfully repelled sophisticated deepfake attacks share one characteristic: they had pre-established, independent verification channels that the attacker could not replicate or anticipate. A secret code word. A physical confirmation step. A callback to a number stored in the corporate directory, not the number that called you. These low-technology controls are, paradoxically, the highest-leverage defenses available right now.
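The callback rule is simple enough to express in a few lines. The sketch below assumes a hypothetical in-memory directory keyed by employee ID; the point it illustrates is that the inbound number is never used as the number to dial.

```python
from typing import Mapping, Optional


def resolve_callback(
    claimed_identity: str,
    inbound_number: str,
    directory: Mapping[str, str],
) -> Optional[str]:
    """Return the number to call back, taken only from the directory.

    inbound_number is accepted so callers can log it, but it is deliberately
    ignored as a dial target because caller ID can be spoofed.
    """
    return directory.get(claimed_identity)


directory = {"cfo": "+1-555-0100"}  # hypothetical directory entry
print(resolve_callback("cfo", "+1-555-0199", directory))  # +1-555-0100
```

When the claimed identity is not in the directory, the function returns `None`, which a workflow could treat as "cannot verify, do not proceed".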

Why Urgency Is Always the Attack Vector

Review every documented enterprise deepfake fraud case and one constant emerges: the attack always includes an urgency construct. A deal closing today. A compliance deadline this afternoon. A wire that must clear before markets open. Urgency is not incidental — it is load-bearing architecture in the attack, because urgency is the most effective mechanism for bypassing the pause-and-verify instinct.

This means that urgency itself should function as a security trigger, not a compliance accelerant. Every process that can be expedited under claimed urgency is a risk surface. Every time an employee feels pressure to skip a step, the attacker has successfully reached the most important moment in their attack chain. Training employees to recognize urgency as a red flag — and explicitly empowering them to slow down without career consequences — is among the highest-return security investments available.
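One way to make urgency a trigger rather than an accelerant is to have urgency cues add verification steps and never remove them. The sketch below uses crude keyword matching purely for illustration; the cue list and step names are assumptions, and a real deployment would need something more robust than substring checks.

```python
# Hypothetical cue list; substring matching is a deliberate simplification.
URGENCY_CUES = (
    "urgent",
    "immediately",
    "right away",
    "today",
    "this afternoon",
    "before markets open",
)

BASE_STEPS = ("second approver", "out-of-band callback")
URGENCY_STEP = "mandatory pause and supervisor review"


def has_urgency_cue(message: str) -> bool:
    text = message.lower()
    return any(cue in text for cue in URGENCY_CUES)


def required_steps(message: str) -> list[str]:
    """Return the verification steps for a request; urgency only ever adds steps."""
    steps = list(BASE_STEPS)
    if has_urgency_cue(message):
        steps.append(URGENCY_STEP)
    return steps


print(required_steps("The deal closes today, wire it before markets open."))
```

The base steps are never skipped, so claiming a deadline cannot shorten the path to approval.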

Incident Response Playbook

When a suspected deepfake attack occurs or is discovered after the fact, the quality of the organization’s response in the first 72 hours determines the magnitude of the outcome. The following is the response framework DataBusinessCentral recommends.

1. 0–15 Minutes

Immediate Containment

Halt all pending financial transactions or access approvals triggered by the suspicious communication. Isolate the communication channel. Do not delete anything — preserve all call recordings, emails, video files, and metadata as forensic evidence. Notify the direct supervisor of the targeted employee.

2. 15–60 Minutes

Out-of-Band Verification

Contact the purported sender through a completely separate, pre-established channel — not a reply to the suspicious communication. Use a known direct number from your corporate directory or an in-person check. Simultaneously, engage your deepfake detection tooling to begin media analysis on any preserved files.

3. 1–4 Hours

Internal Escalation

Alert CISO, legal counsel, and corporate communications teams. If financial fraud is confirmed, engage your bank’s fraud team immediately — transaction recall windows are narrow. Brief affected department heads without broader organizational disclosure pending investigation scope confirmation.

4. 4–72 Hours

Forensic Investigation

Engage deepfake forensics specialists for technical media analysis. Map the full attack chain: which public sources were used, how long the attacker prepared, which employees were targeted (the visible target may not be the only one). Determine whether any credentials, data, or system access may have been compromised beyond the immediate incident.

5. 72 Hours+

Regulatory Reporting and External Disclosure

Report to law enforcement (FBI IC3, local cybercrime units) and relevant financial regulators per applicable breach notification obligations. Coordinate legal counsel on EU AI Act or FTC notification requirements where applicable. Issue carefully managed internal staff communications and external statements if warranted by the attack’s nature or scale.

6. Ongoing

Post-Incident Hardening

Conduct a thorough review of every procedural gap the attacker exploited. Update authentication workflows and approval thresholds. Run targeted staff training using the actual incident as a case study (anonymized as appropriate). Publish an internal lessons-learned report. Update your threat detection models with indicators of compromise from this specific attack.
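The time windows in the playbook can be encoded as a small lookup so that a ticketing or on-call tool can show responders which phase applies. This is a minimal sketch: the `current_phase` function is a hypothetical helper, and the phase names and boundaries are taken directly from the steps above.

```python
from datetime import timedelta

# (upper bound of the window, phase name), in playbook order.
PHASES = [
    (timedelta(minutes=15), "Immediate Containment"),
    (timedelta(minutes=60), "Out-of-Band Verification"),
    (timedelta(hours=4), "Internal Escalation"),
    (timedelta(hours=72), "Forensic Investigation"),
]
FINAL_PHASE = "Regulatory Reporting and External Disclosure"
# Post-Incident Hardening is ongoing and has no time window, so it is not listed.


def current_phase(elapsed: timedelta) -> str:
    """Return the playbook phase for the time elapsed since the incident was detected."""
    for upper_bound, name in PHASES:
        if elapsed < upper_bound:
            return name
    return FINAL_PHASE


print(current_phase(timedelta(hours=2)))  # Internal Escalation
```

In practice the phases overlap (forensic preservation starts in the first minutes), so a lookup like this is a prompt for responders rather than a strict state machine.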

Field Guide: Spotting a Deepfake in Real Time

Technology detection systems are the primary line of defense, but the human layer catches what they miss. These are the observable signals that should prompt an employee to pause and verify independently.

Video Call Indicators

- Unnatural eye blinking patterns or fixed gaze that does not track naturally

- Lip movement that is slightly ahead of or behind the spoken audio

- Hairline, ear, or jaw edges that appear blurred, flickering, or inconsistent

- Facial expressions that feel delayed or do not match the emotional weight of the words

- Lighting or shadow on the face that is inconsistent with the visible background

- Glasses, jewelry, or accessories that warp, flicker, or behave unnaturally

Voice and Audio Indicators

- Slight mechanical, processed, or flat undertone beneath the voice

- Unusual pauses or unnatural rhythmic cadence in speech

- Background environment sounds that are absent, inconsistent, or artificially clean

- Word choices or phrasing that do not match the individual’s known communication style

- Refusal or inability to engage in spontaneous off-script conversation or answer unexpected questions naturally

Behavioral and Contextual Red Flags

- Unusual urgency or extreme time pressure applied to a request

- Request routes around standard approval channels

- Explicit instruction not to contact anyone else or to keep the matter secret

- The request’s context does not align with current known business activities or priorities

- Communication style, tone, or vocabulary noticeably different from the person’s normal manner

The Golden Rule

Any communication — regardless of medium, regardless of the seniority of the apparent sender — that requests a financial transfer, credential change, system access modification, or sensitive disclosure should be verified through a completely separate, pre-established channel before any action is taken. The more urgent the request, the more essential the verification step. Urgency is a manipulation tactic, not a reason to bypass controls.

What’s Coming: The Next 24 Months

The deepfake threat landscape is not static. The following developments represent the near-term trajectory that enterprise security teams should be building toward today.

Automated Attack Factories

As generative AI tooling continues to improve, the labor cost of constructing a targeted deepfake attack will approach zero. What currently requires some degree of manual crafting will be automated: scrape public executive media, generate voice clone, construct attack narrative, deploy at scale across target employee list. This automation shift means that deepfake attacks will move from being exclusively high-effort, high-value targeted operations to also including high-volume, lower-effort campaigns — the deepfake equivalent of phishing automation.

Multi-Modal Attack Fusion

Current attacks typically rely on one primary synthetic medium — voice or video — supported by conventional social engineering elements. The next generation will fuse multiple synthetic modalities simultaneously: a deepfake video call accompanied by forged document delivery through a compromised-looking portal, with voice cloning layered over the video to reinforce the illusion. The combined effect will be harder to interrupt with any single detection layer.

Regulatory Catch-Up

The EU AI Act explicitly addresses synthetic media and is likely to impose new obligations on enterprises regarding deepfake disclosure, detection infrastructure, and incident reporting. US regulatory frameworks are developing in parallel. Organizations that build their detection and governance capabilities proactively will be significantly better positioned than those who wait for compliance mandates to arrive.

Detection Technology Arms Race

The deepfake detection market is projected to grow at 42% annually, from $5.5 billion in 2023 to over $15 billion by 2026. This investment will drive meaningful improvements in real-time detection accuracy and integration into enterprise communication stacks. But as Deloitte’s analysis observes, the dynamic closely mirrors the broader cybersecurity landscape — a perpetual arms race where defenders and attackers continuously adapt to one another. Technology investment is necessary but never sufficient.
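As a quick sanity check on the projection quoted above, the figures are consistent with compound growth over three annual periods (2023 to 2026). The `project` helper below is a hypothetical name used only for this arithmetic.

```python
def project(start_value: float, annual_growth: float, years: int) -> float:
    """Compound start_value forward by annual_growth for the given number of years."""
    return start_value * (1 + annual_growth) ** years


# 5.5 (billion USD in 2023) growing 42% per year for three years.
market_2026 = project(5.5, 0.42, 2026 - 2023)
print(f"{market_2026:.2f}")  # 15.75
```

The result, roughly 15.75 billion USD, matches the "over $15 billion" figure.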

Executive Action Checklist

For CISO, CFO, and CEO teams looking for an immediate-action summary, the following checklist represents the minimum viable posture for enterprises in 2026.

30-Day Priority Actions

These are the highest-leverage steps that can be implemented without major technology procurement or extended timelines.

- Establish out-of-band verification for all wire transfers and access changes: a mandatory callback to a pre-stored number or an in-person confirmation, with no exceptions above a defined threshold

- Create a secret code word system for C-suite to finance team communication: a shared phrase known only internally that the caller must volunteer without prompting

- Run a deepfake simulation exercise within 30 days targeting your finance, HR, and IT teams specifically, not a generic phishing drill

- Conduct an executive media audit: identify all publicly available audio and video of your senior leadership and assess what a sophisticated attacker could construct from it

- Formally elevate deepfake fraud to the board agenda and assign an owner for detection and response capability development

- Review your current identity verification vendor: ensure they have documented real-world deepfake detection performance, not only benchmark accuracy claims

- Assess your incident response plan: confirm it explicitly addresses deepfake scenarios, defines containment steps, and identifies who contacts the bank’s fraud team and when

Deepfake fraud is not a future threat to prepare for. It is a present operational reality that is already claiming enterprise victims at scale. The organizations that will navigate this environment successfully are those that treat procedural resilience as the foundation, technology detection as a force multiplier, and employee judgment as the final and irreplaceable line of defense. The question is not whether your organization will face a sophisticated deepfake attempt. The question is whether your people and your processes will be ready when it arrives.