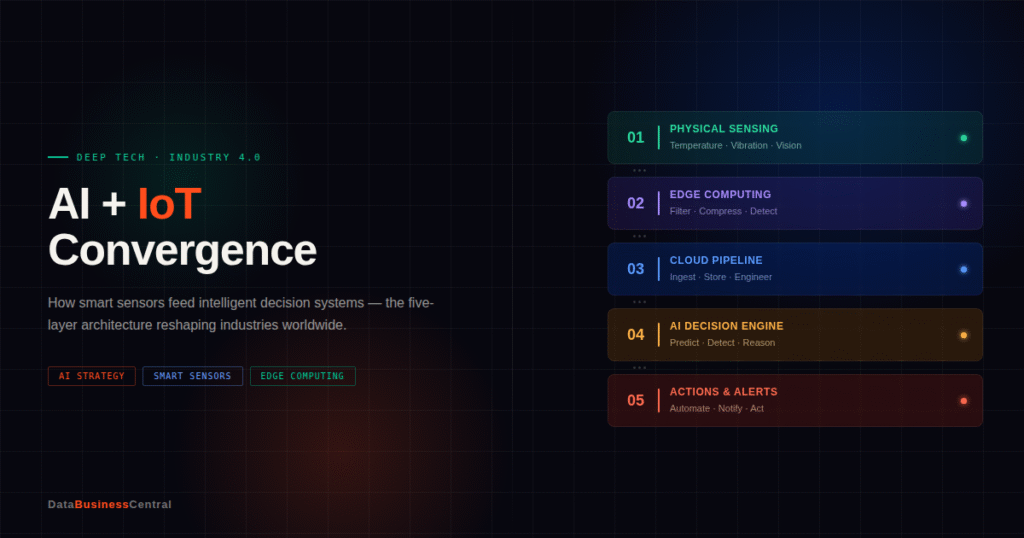

The real revolution isn’t the sensor or the model — it’s the architecture connecting the two. A deep dive into the five-layer stack transforming industries worldwide.

The internet of things promised a world of connected devices. Artificial intelligence promised machines that could think. The real revolution isn’t either technology alone — it’s what happens when they meet.

The core problem

From dumb sensor to smart signal

Early IoT deployments had a fundamental problem: they generated more data than anyone could meaningfully process. A single factory floor might produce terabytes of sensor readings daily, most of it unremarkable — and inside that ocean of unremarkable data, the critical anomaly you cared about was almost impossible to find manually.

AI changes the math entirely. Instead of asking a human analyst to review dashboards, machine learning models learn what “normal” looks like for a given sensor in a given context, and flag deviations automatically. The sensor is no longer just recording; it’s contributing to a judgment.
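As a rough illustration of "learning what normal looks like", the sketch below keeps a rolling window of recent readings and flags any value that sits more than a few standard deviations from that baseline. The class name, window size, and threshold are assumptions made for this example; real deployments typically use richer, context-aware models.

```python
from collections import deque
from statistics import mean, stdev


class BaselineDetector:
    """Flags readings that deviate from a rolling baseline of recent values."""

    def __init__(self, window=50, threshold=3.0, min_samples=10):
        self.history = deque(maxlen=window)  # recent readings treated as "normal"
        self.threshold = threshold           # allowed deviation, in standard deviations
        self.min_samples = min_samples       # readings required before judging anything

    def observe(self, value):
        """Return True if `value` is anomalous relative to the learned baseline."""
        is_anomaly = False
        if len(self.history) >= self.min_samples:
            mu = mean(self.history)
            sigma = stdev(self.history)
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                is_anomaly = True
        # Only fold normal readings into the baseline, so a single spike
        # does not widen what counts as "normal".
        if not is_anomaly:
            self.history.append(value)
        return is_anomaly


# Made-up temperature readings: a steady pattern followed by one spike.
detector = BaselineDetector()
readings = [20.0 + 0.1 * (i % 5) for i in range(30)] + [27.5]
flags = [detector.observe(r) for r in readings]
```

Here only the final reading is flagged. The steady readings before it never trip the threshold, which is the point: the analyst sees one event instead of thirty-one rows.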

Today, billions of sensors are generating data about temperature, pressure, motion, power consumption, and dozens of other variables every second. Without intelligence, all that data is noise. Without data, AI models have nothing to act on. The convergence of the two is quietly reshaping how industries operate, how cities function, and how decisions get made in real time.

$50B: Annual cost of unplanned downtime in US manufacturing alone, the primary driver of predictive maintenance investment

75%: Reduction in unexpected equipment breakdowns reported by deployments with mature AI-driven predictive maintenance

30%: Energy cost reduction achieved by smart building systems combining IoT sensor grids with AI optimization models

The architecture in detail

What’s happening at each layer

Layer 1 — Physical sensing. This is where the world gets quantified. Temperature sensors in a cold chain, vibration sensors on industrial motors, cameras in retail spaces, pressure gauges in pipelines. The common thread is that physical phenomena get converted into digital signals. The sophistication of modern sensors — MEMS accelerometers, hyperspectral cameras, solid-state gas detectors — means we are quantifying aspects of the physical world that were previously unobservable at any cost.

Layer 2 — Edge computing. Rather than shipping every raw reading to the cloud, modern deployments process data locally, at or near the sensor. Edge devices filter out noise and routine readings, compress what remains, and run lightweight models that can flag urgent anomalies immediately, without waiting for a round trip to the cloud. A motor that starts vibrating unusually doesn’t need to wait for a server two thousand miles away to notice.
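One way to picture the edge layer's filtering is a deadband filter with an urgent override: readings that barely differ from the last value sent are dropped, meaningful changes are forwarded, and anything above a hard limit is flagged locally. This is a minimal sketch; the `EdgeFilter` name and both limits are hypothetical values chosen for the example.

```python
class EdgeFilter:
    """Decides per reading whether to drop it, forward it, or flag it as urgent."""

    def __init__(self, deadband=0.5, urgent_limit=8.0):
        self.deadband = deadband          # hypothetical: smallest change worth sending
        self.urgent_limit = urgent_limit  # hypothetical: level needing an immediate flag
        self.last_sent = None             # last value that left the device

    def process(self, value):
        """Return 'urgent', 'forward', or 'drop' for one reading."""
        if value >= self.urgent_limit:
            self.last_sent = value
            return "urgent"
        if self.last_sent is None or abs(value - self.last_sent) >= self.deadband:
            self.last_sent = value
            return "forward"
        return "drop"


# Made-up vibration readings in mm/s.
edge = EdgeFilter()
vibration_mm_s = [2.0, 2.1, 2.2, 2.9, 3.0, 9.4, 3.1]
decisions = [edge.process(v) for v in vibration_mm_s]
```

Of the seven readings, three are dropped at the device and one is flagged without any cloud round trip.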

Layer 3 — Cloud data pipeline. The cleaned, filtered stream arrives in the cloud, where it gets ingested into time-series databases, enriched with contextual data (weather, maintenance logs, shift schedules), and transformed into the features that AI models actually need. This is also where historical data accumulates — the training ground for future models and the foundation for meaningful trend analysis.
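The enrichment and feature steps might look something like the following sketch, which groups raw readings into fixed windows, computes a few summary statistics per window, and merges in contextual fields. The function name, the feature set, and the `shift` field are illustrative assumptions, not a prescribed schema.

```python
from statistics import mean, pstdev


def window_features(readings, context, window=4):
    """Turn raw (timestamp, value) readings into one feature row per window.

    `context` maps a window's start timestamp to extra fields (for example
    the shift on duty); windows without context are left unenriched.
    """
    rows = []
    for start in range(0, len(readings) - window + 1, window):
        chunk = readings[start:start + window]
        values = [v for _, v in chunk]
        row = {
            "t_start": chunk[0][0],
            "mean": mean(values),
            "std": pstdev(values),
            "peak_to_peak": max(values) - min(values),
        }
        row.update(context.get(chunk[0][0], {}))  # enrichment step
        rows.append(row)
    return rows


# Made-up readings and context.
readings = list(enumerate([1.0, 2.0, 3.0, 2.0, 5.0, 5.0, 5.0, 5.0]))
context = {0: {"shift": "day"}, 4: {"shift": "night"}}
features = window_features(readings, context)
```

Each row is what a downstream model would consume: a compact description of a window plus the context needed to interpret it.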

Layer 4 — AI decision engine. This is where intelligence lives. Predictive models forecast whether a motor will fail in the next 72 hours. Anomaly detection flags readings that fall outside learned baselines. Reinforcement learning agents adjust HVAC systems in real time to minimize energy consumption. Large language models synthesize sensor data with maintenance records and produce natural-language summaries for technicians who don’t speak data science.
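To make the "will it fail in the next 72 hours" question concrete, here is a deliberately simple stand-in for a predictive model: fit a straight line to recent readings and estimate when it crosses a failure threshold. Production systems generally use far more capable models; the linear trend, the threshold, and the readings below are all assumptions for illustration.

```python
def hours_until_threshold(hours, values, threshold):
    """Fit a least-squares line to (hours, values) and extrapolate to `threshold`.

    Returns the estimated hours from the last sample until the fitted line
    crosses the threshold, or None if the trend is flat or falling.
    """
    n = len(hours)
    mean_x = sum(hours) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in hours)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(hours, values))
    if sxx == 0:
        return None
    slope = sxy / sxx
    if slope <= 0:
        return None
    intercept = mean_y - slope * mean_x
    crossing = (threshold - intercept) / slope
    return max(0.0, crossing - hours[-1])


# Made-up bearing temperatures sampled once a day.
hours = [0, 24, 48, 72, 96]
bearing_temp_c = [60.0, 61.0, 62.0, 63.0, 64.0]
remaining = hours_until_threshold(hours, bearing_temp_c, threshold=66.0)
fails_within_72h = remaining is not None and remaining <= 72
```

With these numbers the trend reaches the threshold roughly 48 hours after the last sample, so the asset would fall inside a 72-hour warning window.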

Layer 5 — Actions and alerts. Intelligence without action is just analysis. The final layer closes the loop: automated responses and human-facing alerts that surface only what genuinely needs a person’s attention. The feedback from those actions becomes training data, and the models get smarter over time.
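A small sketch of how this layer could surface only what needs attention and keep the feedback: alerts below a score cutoff or inside a cooldown window are suppressed, and a technician's verdict is stored as a labeled example for later retraining. `AlertGate`, the cutoff, and the cooldown are hypothetical choices for this example.

```python
class AlertGate:
    """Suppresses low-score and repeat alerts, and records feedback as labels."""

    def __init__(self, cooldown_s=3600, min_score=0.8):
        self.cooldown_s = cooldown_s
        self.min_score = min_score
        self.last_alert = {}     # asset_id -> time the last alert was sent
        self.training_rows = []  # (asset_id, score, was_real_fault)

    def should_alert(self, asset_id, score, now_s):
        if score < self.min_score:
            return False
        last = self.last_alert.get(asset_id)
        if last is not None and now_s - last < self.cooldown_s:
            return False  # already alerted on this asset recently
        self.last_alert[asset_id] = now_s
        return True

    def record_feedback(self, asset_id, score, was_real_fault):
        """Store the technician's verdict so it can serve as training data."""
        self.training_rows.append((asset_id, score, was_real_fault))


gate = AlertGate()
sent = [
    gate.should_alert("pump-7", 0.95, now_s=0),     # first alert goes out
    gate.should_alert("pump-7", 0.97, now_s=600),   # inside cooldown
    gate.should_alert("pump-7", 0.50, now_s=4000),  # score too low
    gate.should_alert("pump-7", 0.90, now_s=4000),  # cooldown has passed
]
gate.record_feedback("pump-7", 0.95, was_real_fault=True)
```

Four model outputs become two alerts, and the one confirmed fault becomes a labeled row.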

The sensor and the model are converging toward a single system — one that perceives, reasons, and acts with increasing autonomy.

Real-world deployment

Where this is already working

These aren’t projections. They’re documented outcomes from deployments that have been running long enough to measure.

Industrial

Predictive maintenance

Manufacturers use vibration, temperature, and acoustic sensors on equipment to predict failures days or weeks before they happen. The AI isn’t just detecting anomalies — it correlates patterns across hundreds of machines to learn the signature of a bearing about to fail.

Energy

Smart energy grids

Thousands of grid nodes, weather feeds, and consumption patterns feed AI dispatch systems that balance load in real time — rerouting power, anticipating demand spikes, and integrating intermittent renewable sources far more efficiently than rule-based systems.

Agriculture

Precision farming

Soil moisture sensors, drone imagery, and satellite data feed AI models that recommend exactly when to irrigate, fertilize, or harvest — reducing waste and improving yields. The sensor isn’t just a thermometer; it’s a node in a system that knows the whole farm.

Healthcare

Clinical wearables

Continuous glucose monitors, ECG patches, and oxygen sensors feed AI models that detect atrial fibrillation, predict hypoglycemic events, or flag early signs of sepsis in hospitalized patients — before clinical symptoms are observable.

The hard problems

What the frontier looks like

The convergence isn’t frictionless. A few challenges define where the serious engineering work is happening right now.

- 01 Data quality and model drift. AI models are only as good as the data they’re trained on. Sensors degrade, get miscalibrated, or operate in conditions that shift over time. A model trained on summer behavior can fail silently in winter. Continuous monitoring of model performance, not just sensor health, is an emerging discipline that lags far behind sensor deployment.

- 02 Latency versus accuracy trade-offs. Edge models are fast but limited in complexity. Cloud models are powerful but introduce latency. Deciding which decisions need to happen in milliseconds versus seconds versus minutes is an architectural judgment that varies enormously by application, and getting it wrong in either direction has real consequences.

- 03 Security and attack surface. Sensors collect intimate data about behavior, health, location, and industrial operations. Every additional connection point is a potential attack vector. The more intelligent the system, the more damaging a compromise becomes: an adversary who can manipulate sensor readings can corrupt model decisions downstream.

- 04 Hardware fragmentation. The IoT hardware landscape is deeply fragmented. Protocols like MQTT, CoAP, and Matter have helped, but getting sensors from a dozen manufacturers to feed coherently into a single AI pipeline still requires significant engineering effort, and that cost is often invisible until deployment.
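The drift problem in item 01 can be made concrete with a population stability index (PSI), one common way to compare the distribution a model was trained on with what the sensor is reporting now. The sketch below is a simplified version: the bin count, the floor for empty bins, and the data are assumptions, and a threshold of about 0.25 is only a commonly quoted rule of thumb for a significant shift.

```python
import math


def population_stability_index(reference, recent, bins=5):
    """Compare two samples of one feature, binning both on the reference range."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins
    if width == 0:
        width = 1.0  # degenerate reference: everything lands in one bin

    def fractions(sample):
        counts = [0] * bins
        for x in sample:
            idx = int((x - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(sample), 1e-6) for c in counts]

    ref, rec = fractions(reference), fractions(recent)
    return sum((r - q) * math.log(r / q) for q, r in zip(ref, rec))


# Made-up data: "winter" has the same shape as "summer" but is 8 degrees colder.
summer = [20 + (i % 10) for i in range(100)]
winter = [x - 8 for x in summer]
psi_same = population_stability_index(summer, summer)
psi_shifted = population_stability_index(summer, winter)
```

Identical distributions score zero, while the shifted sample scores far above the rule-of-thumb threshold. A check like this can run on inputs alone, before any failures have been labeled.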

What’s coming next

Three shifts to watch

The next wave of AI-IoT convergence is moving beyond reactive intelligence toward something closer to autonomous operation.

Digital twins

AI models that maintain real-time simulations of physical systems — sophisticated enough to run what-if scenarios before taking action in the real world.
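A toy version of the what-if idea: a one-line thermal model stands in for the twin, each candidate cooling setting is simulated forward, and only a setting that stays within limits in simulation would be applied to the real room. The model, its coefficients, and the function names are invented for illustration and bear no relation to a calibrated twin.

```python
def simulate_room(temp_c, outside_c, cooling_kw, steps=12, leak=0.1, cool_per_kw=0.4):
    """Toy thermal twin: each step the room drifts toward the outside temperature
    and is pulled down by cooling. All coefficients are made up."""
    trajectory = []
    for _ in range(steps):
        temp_c = temp_c + leak * (outside_c - temp_c) - cool_per_kw * cooling_kw
        trajectory.append(temp_c)
    return trajectory


def cheapest_safe_setting(temp_c, outside_c, candidates_kw, limit_c):
    """Return the lowest cooling power whose simulated temperature never
    exceeds `limit_c`, or None if no candidate is safe."""
    for kw in sorted(candidates_kw):
        if max(simulate_room(temp_c, outside_c, kw)) <= limit_c:
            return kw
    return None


choice = cheapest_safe_setting(
    temp_c=22.0, outside_c=32.0, candidates_kw=[0.0, 1.0, 2.0, 3.0], limit_c=25.0
)
```

In this scenario the two lowest settings overshoot the limit in simulation, so the search settles on 2.0 kW without ever trying the unsafe options on the physical system.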

Foundation sensor models

Models trained on massive, multi-domain sensor datasets are beginning to generalize across industries the way LLMs generalize across text — without domain-specific fine-tuning.

Autonomous closed loops

Systems that not only detect and alert but reconfigure themselves — rebalancing grids, rerouting logistics, adjusting manufacturing tolerances — with decreasing human involvement.
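One way to think about "decreasing human involvement" is an envelope inside which the system may act alone. The sketch below is a rate-limited proportional control step that applies small corrections automatically and escalates to a person when the proposed setting would leave a safe range. The gain, step limit, and range are assumed values for illustration.

```python
def closed_loop_step(measured, target, setting, gain=0.5, max_step=2.0,
                     safe_range=(0.0, 100.0)):
    """One control step: nudge `setting` to close the gap between `measured`
    and `target`. Returns (new_setting, needs_human)."""
    error = target - measured
    step = max(-max_step, min(max_step, gain * error))  # rate-limit each change
    proposed = setting + step
    lo, hi = safe_range
    if proposed < lo or proposed > hi:
        # Outside the envelope the system may act in alone: hold and escalate.
        return setting, True
    return proposed, False


routine = closed_loop_step(measured=48.0, target=50.0, setting=40.0)
escalated = closed_loop_step(measured=10.0, target=50.0, setting=99.0)
```

The first call is adjusted automatically. The second would push the setting past its limit, so the system holds the current value and asks for a human decision. Widening `safe_range` over time is one way such a system could take on more autonomy.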

The business case, plainly stated

The argument for AI-IoT convergence is fundamentally economic. Predictive maintenance systems routinely achieve 20–30% reductions in maintenance costs and 70–75% reductions in unexpected breakdowns. In cold chain logistics, real-time temperature monitoring with AI-driven alerts can prevent millions in spoiled inventory annually. In commercial real estate, smart building systems are cutting energy costs by 15–30%.

These figures come from deployments that have been running long enough to measure. The question for business leaders is no longer whether the technology works; it’s whether your organization has the data infrastructure and architectural clarity to deploy it effectively.

The businesses that understand the five-layer stack connecting sensor to decision will be the ones positioned to compound those advantages as the models and the hardware continue to improve together.